You know that customer feedback is important. You know that to evaluate the happiness of your customers, you need to know what they truly think about your product or service. And you know that the way to do so is by using a customer survey.

The difference between a successful and unsuccessful survey boils down to one thing: The questions asked. Learning how to write survey questions will set you up for success.

Once you know how to create a survey, begin by answering this question: What information are you trying to gather? After you have that answer, it's time to begin learning how to write survey questions.

Writing a Survey: The Basics

The best surveys are simple, concise, and organized logically. They contain a user-friendly balance of short-answer and multiple-choice questions that elicit specific information from the participant. Additionally, most questions should be optional and framed in a manner that avoids any bias.

Picking the right questions can be difficult because you want to make sure your survey contains an even balance of different question types.

Pro Tip: HubSpot has Customer Feedback Software where you can create customer surveys, share customer insights with your team, and analyze customer feedback data to improve their experience.

Before I walk you through the steps necessary to write your own survey, let's first recap some important statistics to demonstrate why doing so is crucial.

Customer Service Survey Statistics

- Monday is the best day to get the highest number of completed email surveys for B2B businesses. (Source: CheckMarket)

- For B2C companies, no single day of the week is markedly better than the others. Tuesdays, Wednesdays, and Fridays all lead to relatively high response rates, while Thursdays and Sundays are best avoided. (Source: CheckMarket and SurveyMonkey)

- Response rates can soar past 85% when the respondent population is motivated and the survey is well-executed. (Source: People Pulse)

- Response rates can also fall below 2% when the respondent population is less targeted, when contact information is unreliable, or when there is little incentive or motivation to respond. (Source: People Pulse)

- Best practice is to keep your survey as short as possible. Data suggests that once a respondent begins a survey, the drop-off rate rises sharply with each additional question, up to 15 questions. (Source: SurveyMonkey)

- 85% of customers are likely to give feedback when their experience with a brand or company went well, and 81% are likely to give feedback when it went poorly. (Source: SurveyMonkey)

- 91% of people believe that companies should fuel innovation by listening to buyers and customers, compared to only 31% who think they should hire a team of experts. (Source: SurveyMonkey)

- A good survey response rate ranges between 5% and 30%. An excellent response rate is 50% or higher. (Source: Delighted by Qualtrics)

- Average survey response rates ranged from 6% to 16% among Delighted users, varying by survey channel. iOS surveys scored the highest response rates, followed by website and email surveys. (Source: Delighted by Qualtrics)

  - 6% for email surveys

  - 8% for website surveys

  - 16% for iOS surveys
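To see where your own survey lands against the benchmarks above, you can compute the response rate yourself. Here's a minimal Python sketch. The function names and the sample numbers are hypothetical, and because the quoted source doesn't label rates below 5% or between 30% and 50%, the names for those bands are assumptions made for this example.

```python
def response_rate(completed: int, invited: int) -> float:
    """Return the response rate as a percentage of the people invited."""
    if invited <= 0:
        raise ValueError("invited must be positive")
    return 100.0 * completed / invited


def rate_band(rate_pct: float) -> str:
    """Label a rate using the 5%-30% (good) and 50%+ (excellent) ranges.

    The labels for the other two bands are assumptions of this sketch,
    not part of the quoted benchmark.
    """
    if rate_pct >= 50:
        return "excellent"
    if rate_pct > 30:
        return "above good"
    if rate_pct >= 5:
        return "good"
    return "below good"


# Hypothetical campaign: 1,000 invitations sent, 120 surveys completed.
rate = response_rate(completed=120, invited=1000)
print(f"{rate:.1f}% -> {rate_band(rate)}")
```

Tracking this number for each channel (email, website, in-app) makes it easier to compare your results with the channel averages listed above.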

Types of Survey Questions

- Multiple Choice

- Rating Scale

- Likert Scale

- Ranking

- Semantic Differential

- Dichotomous

- Close-Ended

- Open-Ended

1. Multiple Choice

Best for: Gathering customers' demographic information, product or service usage, and consumer priorities.

Multiple choice survey questions offer respondents a variety of different responses to choose from. These questions are usually accompanied by an "other" option that the respondent can fill in with a custom answer if the options don't apply to them.

Multiple choice survey questions are among the most popular types of survey questions because they're easy for respondents to fill out and the results produce clean data that's easy to analyze and share.

Single-Answer

Single-answer multiple choice questions only allow respondents to select one answer from a list of options. These frequently appear online as circular buttons respondents can click.

Multiple-Answer

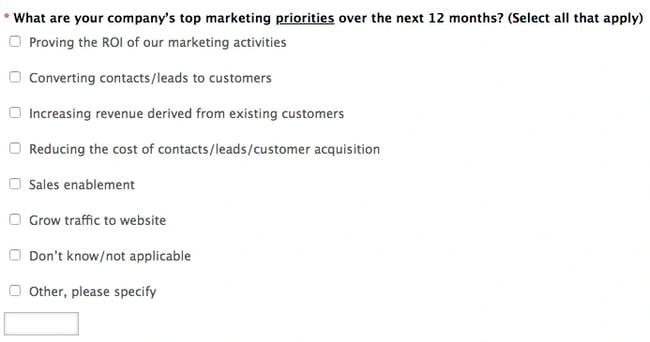

Multiple-answer multiple choice questions allow respondents to select all responses that apply from a list of options. These frequently appear as checkboxes respondents can select.

2. Rating Scale

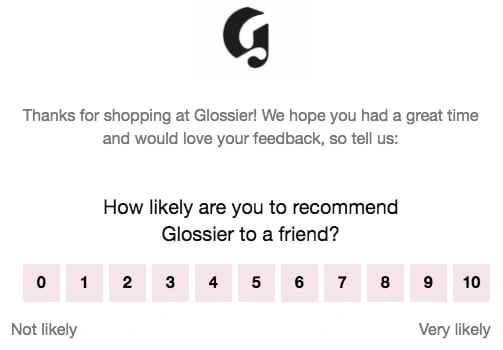

Best for: Gauging your company's Net Promoter Score® (NPS), an example of a common rating scale survey question.

Rating scale questions (also known as ordinal questions) ask respondents to rate something on a numerical scale assigned to sentiment. The question might ask respondents to rate satisfaction or happiness on a scale of 1-10 and indicate which number is assigned to positive and negative sentiment.

Rating scale survey questions are helpful to measure progress over time. If you send the same group a rating scale several times throughout a time period, you can measure if the sentiment is trending positively or negatively.
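To make the idea of tracking sentiment over time concrete, here's a rough Python sketch that scores two rounds of an NPS question and compares them. It assumes the standard NPS convention, which isn't spelled out in this post: ratings run from 0 to 10, respondents who answer 9 or 10 are promoters, respondents who answer 0 to 6 are detractors, and the score is the percentage of promoters minus the percentage of detractors. The ratings themselves are made up for illustration.

```python
def net_promoter_score(ratings: list[int]) -> float:
    """Return NPS: percent promoters (9-10) minus percent detractors (0-6)."""
    if not ratings:
        raise ValueError("need at least one rating")
    if any(r < 0 or r > 10 for r in ratings):
        raise ValueError("ratings must be between 0 and 10")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100.0 * (promoters - detractors) / len(ratings)


# Hypothetical ratings from the same group, surveyed twice.
first_wave = [10, 9, 8, 6, 3, 9, 7, 10, 5, 8]
second_wave = [10, 9, 9, 7, 6, 9, 8, 10, 8, 9]

first = net_promoter_score(first_wave)
second = net_promoter_score(second_wave)
trend = "up" if second > first else "down" if second < first else "flat"
print(f"NPS moved from {first:.0f} to {second:.0f} ({trend})")
```

Because the score only makes sense when the same question and scale are used each time, keep the wording identical between rounds.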

3. Likert Scale

Best for: Evaluating customer satisfaction.

Likert scale survey questions evaluate whether a respondent agrees or disagrees with a statement. Usually appearing on a five- or seven-point scale, the scale might range from "not at all likely" to "highly likely," or "strongly disagree" to "strongly agree."

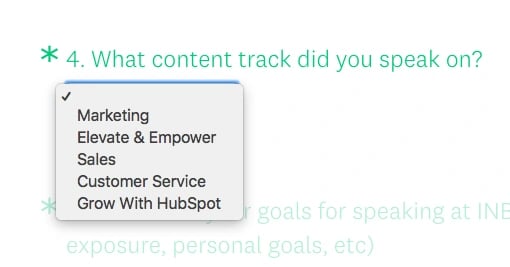
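If you want to turn Likert answers into numbers you can analyze, one common approach is to map each label to a score. The Python sketch below does that for a five-point agreement scale. The middle labels and the 1-to-5 scoring are assumptions for this example (the post only names the two end points), and the sample answers are invented.

```python
from collections import Counter
from statistics import mean

# Assumed five-point agreement scale; only the end labels come from the post.
FIVE_POINT_AGREEMENT = {
    "strongly disagree": 1,
    "disagree": 2,
    "neither agree nor disagree": 3,
    "agree": 4,
    "strongly agree": 5,
}


def summarize_likert(answers: list[str]) -> dict:
    """Return the mean score, share of agreeing answers, and score counts."""
    if not answers:
        raise ValueError("need at least one answer")
    scores = [FIVE_POINT_AGREEMENT[a.strip().lower()] for a in answers]
    return {
        "mean_score": mean(scores),
        # Share of respondents who chose "agree" or "strongly agree".
        "share_agreeing": sum(s >= 4 for s in scores) / len(scores),
        "counts": Counter(scores),
    }


# Hypothetical answers to "The support team resolved my issue quickly."
answers = [
    "Agree",
    "Strongly agree",
    "Disagree",
    "Agree",
    "Neither agree nor disagree",
]
summary = summarize_likert(answers)
print(summary["mean_score"], summary["share_agreeing"])
```

Reporting the share of agreeing answers alongside the mean is a design choice: the mean alone can hide a split between very happy and very unhappy respondents.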

4. Ranking

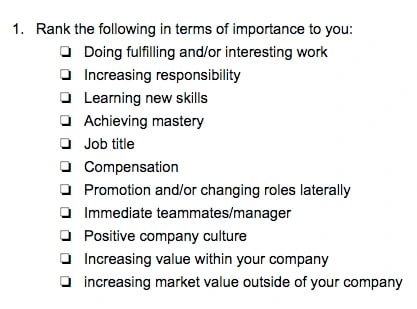

Best for: Learning about customer needs and behavior to analyze how they're using your product or service and what needs they might still have that your product doesn't serve.

Ranking survey questions ask respondents to rank a variety of different answer options in terms of relative priority or importance to them. Ranking questions provide qualitative feedback about the pool of respondents, but they don't offer the "why" behind the respondents' choices.

5. Semantic Differential

Best for: Getting clear-cut qualitative feedback from your customers.

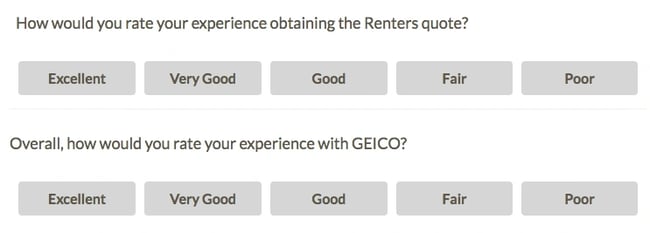

Semantic differential survey questions also ask respondents to rate something on a scale, but each end of the scale is a different, opposing statement. So, instead of answering the question "Do you agree or disagree with X?" respondents must answer questions about how something makes them feel or is perceived by them.

For example, a semantic differential question might ask, "On a scale of 1 to 5, how would you evaluate the service you received?" with 1 being "terrible" and 5 being "exceptional." These questions are all about evaluating respondents' intuitive responses, but they can be tougher to evaluate than more cut-and-dried responses, like agreement or disagreement.

Likert Scale vs. Semantic Differential

Both Likert scale and semantic differential questions are asked on a scale respondents have to evaluate, but the difference lies in how the questions are asked. With Likert scale survey questions, respondents are presented with a statement they must agree or disagree with. With semantic differential survey questions, respondents are asked to complete a sentence, with each end of the scale consisting of different and opposing words or phrases.

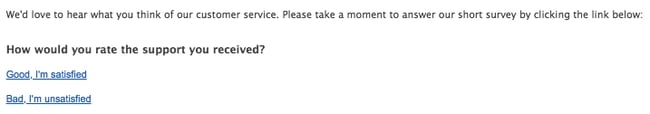

6. Dichotomous

Best for: Getting concise data that's quick and simple to analyze.

Dichotomous survey questions offer only two responses that respondents must choose between. These questions are quick and easy for the respondents to answer and for you to analyze, but they don't leave much room for interpretation, either.

7. Close-Ended

Best for: Gathering quantitative data with rating, ranking, and multiple choice questions.

Close-ended survey questions have a set number of answers that respondents must choose from. All of the questions above are examples of close-ended survey questions. Whether the choices are multiple or only two, close-ended questions must be answered from a set of options provided by the survey creator.

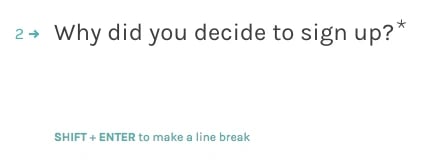

8. Open-Ended

Best for: Gathering qualitative feedback.

Open-ended questions are accompanied by an empty text box where the respondent can write a custom answer to the question.

This qualitative feedback can be incredibly helpful in understanding and interpreting customer sentiment and challenges. However, because it isn't quantitative, it isn't the easiest data to interpret if you want to analyze trends or changes in opinions. You need humans to interpret qualitative feedback for sentiment, tone, and correctness.

We suggest including open-ended questions alongside at least one close-ended question so you collect data you can analyze and forecast over time, as well as those valuable qualitative insights straight from your consumers.
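If you build surveys programmatically, the question types above can be modeled as simple data. Here's a small Python sketch of one way to do it, with a check that a survey mixes close-ended and open-ended questions. The class, the `kind` names, and the sample questions are all hypothetical and aren't tied to any particular survey tool.

```python
from dataclasses import dataclass, field

# The six close-ended types covered in this post.
CLOSE_ENDED_KINDS = {
    "multiple_choice",
    "rating_scale",
    "likert_scale",
    "ranking",
    "semantic_differential",
    "dichotomous",
}


@dataclass
class Question:
    text: str
    kind: str  # one of CLOSE_ENDED_KINDS, or "open_ended"
    options: list[str] = field(default_factory=list)

    def is_close_ended(self) -> bool:
        return self.kind in CLOSE_ENDED_KINDS


def has_balanced_mix(questions: list[Question]) -> bool:
    """True if the survey has at least one close-ended and one open-ended question."""
    has_closed = any(q.is_close_ended() for q in questions)
    has_open = any(q.kind == "open_ended" for q in questions)
    return has_closed and has_open


# Hypothetical two-question survey.
survey = [
    Question("Did our product meet your needs?", "dichotomous", ["Yes", "No"]),
    Question("What could we do better?", "open_ended"),
]
print(has_balanced_mix(survey))
```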

Different Types of Survey Questions to Use: Examples

1. Multiple Choice

Here's an example of a single-answer multiple choice question:


Here's an example of a multiple-answer multiple choice question:


2. Rating Scale

Here's an example of a rating scale survey question in a frequently-used format: NPS.

3. Likert Scale

Here's an example of a five-point Likert scale:

Here's an example of a seven-point Likert scale:

4. Ranking

Here's an example of a ranking survey question:

5. Semantic Differential

Here are examples of semantic differential survey questions:

6. Dichotomous

Here's an example of a dichotomous survey question:

7. Close-Ended

Here's an example of a close-ended, multiple-choice survey question:

8. Open-Ended

Here's an example of an open-ended question you might include in a survey:

How to Write Survey Questions

- Write unbiased survey questions.

- Don't write loaded questions.

- Keep survey question phrasing neutral.

- Don't use jargon.

- Avoid using negative questions.

- Don't write double-barreled questions.

- Encourage respondents to answer all questions.

- Give respondents an option to skip.

- Keep questions clear and concise.

- Test your survey.

You have the must-know survey question basics down, so now it's time to learn how to write survey questions. Don't worry: it's less intimidating than it sounds. I know you're going to do a great job.

1. Write unbiased survey questions.

Leading survey questions are questions that suggest what answer the respondent should select.

Here's an example of a leading survey question: "What's your favorite tool that HubSpot offers?"

This is a leading question because the survey respondent might not like using HubSpot, so a list of different software tools might not accurately reflect the true answer. This question could be improved by offering an answer that allows the respondent not to have a favorite tool.

These questions aren't objective and will lead your respondents to answer a question in a certain way based solely on the wording of the question, making the results unreliable. To avoid this, keep survey questions clear and concise, leaving you little room to lead respondents to your preferred answer. If you are unsure if you have a biased question on your hands, have someone unfamiliar with the survey or subject matter review it and get their feedback.

2. Don't write loaded questions.

Along the same lines, loaded questions force survey respondents to choose an answer that doesn't reflect their opinion, thereby making your data unreliable.

Here's an example of a loaded question: "Where do you enjoy watching sports games?"

This is a loaded question because the respondent might not watch sports games. The survey question would need to include an answer option along the lines of "I don't watch sports games" in order to be objective.

Loaded questions typically contain emotionally charged assumptions that can push a respondent toward answering in a specific way. Remove the emotion from your survey questions by keeping them (mostly) free of adjectives.

3. Keep survey question phrasing neutral.

You know what they say about assuming. Don't build assumptions about what the respondent knows or thinks into the questions -- rather, include details or additional information for them.

Here's an example of a question based on assumptions: "What brand of laptop computer do you own?"

This question assumes the respondent owns a laptop computer when they could be responding to the survey via phone or a shared device. So, to amend the example question above, the answer options would have to include "I don't own a laptop computer" to avoid assumptions.

Instead, keep question phrasing neutral, and leave different options to account for variability in your survey respondents.

4. Don't use jargon.

Jargon can make respondents feel like they aren't in the loop. Use clear, straightforward language that doesn't require a respondent to consult a dictionary.

Here's an example of a jargon-y survey question: "What's your CAC:LTV ratio?"

This survey question assumes the respondent is familiar with both acronyms as well as the ratio as a business metric, which may not be the case. If your survey question includes acronyms, abbreviations, or any words specific to your lexicon, simplify it to ensure greater understanding. This survey question should unpack the definitions of these terms and provide an answer option that accounts for the respondent not having that data on hand. Or, better yet, rewrite the question to skip acronyms.

5. Avoid negative questions.

Negative questions, once again, lead your respondents in one direction instead of another. And that's frustrating. It can make your respondents feel defensive, which is never good.

Here's an example of a negative survey question: "Do you not like using Google?"

These questions also make it tough for you to analyze the results when you get the survey back. Think about it: How can you know which statement the respondent agrees with if the question is structured in a confusing manner?

6. Don't write double-barreled questions.

Double-barreled survey questions ask two questions at once. If you present two questions at the same time, respondents won't know which one to answer -- and your results will be misleading.

Here's an example of a double-barreled survey question: "Are you satisfied or unsatisfied with your compensation and career growth at your current employer?"

If the respondent is happy with their compensation but unhappy with their career growth, they won't know if they should select "Satisfied" or "Unsatisfied" as their answer.

Instead, ask these distinct thoughts in the format of two distinct survey questions. That way, respondents won't be confused, and the resulting data will be clear for you.

7. Encourage respondents to answer all questions.

You're conducting a survey to get people's opinions, and it's tough when they provide you with something like "No comment" or "Not relevant" for an answer. To avoid this, supply the participant with answer options that account for every possibility so the answers reflect their real situation. Use a more specific answer option, like "I'm not sure," to give you a better idea of your survey base.

That brings me to my next point…

8. Always provide an alternative answer.

The goal of your survey should be to obtain customer feedback. However, you don't want this process to come at the expense of your customers' comfort. When asking questions, be sure to include an "I prefer not to answer this question" option. While you'll forfeit the answer, customers won't feel forced to give up sensitive information. Plus, you'd rather have respondents skip one question than quit the survey completely.

The other benefit of including this option is that you can measure your ability to write a survey. If customers are continuously leaving questions blank, you'll know the problem is the phrasing or structure of your survey. You can then reassess your survey's layout and optimize it for engagement.
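One way to spot questions that respondents keep skipping is to calculate a skip rate for each question. The Python sketch below is a rough illustration: the response format (one dictionary per respondent), the question IDs, and the 50% flagging threshold are all assumptions made for this example.

```python
SKIP_OPTION = "I prefer not to answer this question"


def skip_rates(responses: list[dict], question_ids: list[str]) -> dict:
    """Return the share of respondents who skipped each question.

    A question counts as skipped if it is missing, blank, or answered
    with the opt-out option.
    """
    if not responses:
        raise ValueError("need at least one response")
    rates = {}
    for qid in question_ids:
        skipped = sum(
            1 for r in responses if r.get(qid) in (None, "", SKIP_OPTION)
        )
        rates[qid] = skipped / len(responses)
    return rates


# Hypothetical responses from four people to a two-question survey.
responses = [
    {"q1": "Yes", "q2": SKIP_OPTION},
    {"q1": "No", "q2": None},
    {"q1": "Yes", "q2": "Weekly"},
    {"q1": "Yes", "q2": SKIP_OPTION},
]
rates = skip_rates(responses, ["q1", "q2"])

# Flag questions skipped by more than half of respondents (assumed threshold).
flagged = [qid for qid, rate in rates.items() if rate > 0.5]
print(rates, flagged)
```

A flagged question isn't necessarily a bad one. It may simply ask for sensitive information, so review the wording before you remove it.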

9. Keep questions clear and concise.

The best surveys are short and take only a few minutes to complete. In fact, studies show that your completion rate can drop by up to 20% if your survey takes more than seven or eight minutes to finish. That's because customers have busy schedules and will be more interested in your survey if it's a shorter time commitment.

You can also display the estimated completion time before respondents begin so they're reassured it's brief enough for them to stick around.

10. Test your survey.

Testing your questions is one of the best ways to see whether or not your survey is effective with your customer base. You can release early versions of your survey to see how participants react to your questions. If you have low engagement or poor feedback, you can tweak your survey and correct user roadblocks. That way, you can make sure your survey is perfect before it's sent to all of your stakeholders.

Start Writing Better Survey Questions

There you have it: You now know how to write survey questions. Keep these guidelines handy as you begin creating your first survey so you can refer to the suggestions and ensure you don't make any survey question faux pas.

Net Promoter, Net Promoter System, Net Promoter Score, NPS and the NPS-related emoticons are registered trademarks of Bain & Company, Inc., Fred Reichheld and Satmetrix Systems, Inc.

Editor's note: This post was originally published in April 2018 and has been updated for comprehensiveness.
