When Google first announced its Lens technology in May 2017, I was equal parts skeptical and giddy.

The technology promised that if users saw something they didn't recognize -- a bird, a plant, or a piece of clothing they admired -- all they would have to do is point their cameras at it to get the desired details. It was a promising development in technology ... if it ever came to fruition.

With Google Lens, your smartphone camera won’t just see what you see, but will also understand what you see to help you take action. #io17 pic.twitter.com/viOmWFjqk1

— Google (@Google) May 17, 2017

For Pixel users, Lens did materialize only a few months later, in October 2017. But we iPhone users had to wait a bit longer -- until, at long last, Lens became available to us within Google's standalone iOS app.

Naturally, I had to put it to the test.

I decided to test Lens in five different categories -- text, fashion and clothing, plants, animals, and products -- to see how well it could recognize various words, objects, and entities.

Text and Translation

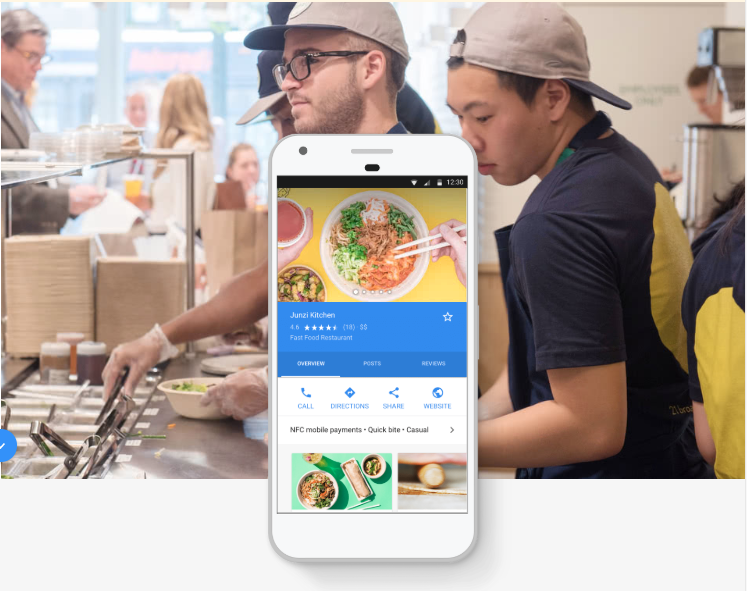

One area of visual recognition promised by Lens is text, allowing users to hover over written words to get more insight on dishes listed on menus, add events to their calendars, get directions somewhere, call a phone number, or translate words.

First, I began with food and beverage items. Without visiting a restaurant, I found it difficult to get hold of a physical menu, so I tried the next best thing: a written cookbook recipe.

This part of the experiment mostly ran smoothly. As per the video above, Lens was immediately able to recognize the cookbook being used as the subject, and was also able to pick out certain words and ingredients -- even recognizing an image of an artichoke.

And I got a chance to see the translation capabilities in real time, when Lens fittingly translated the ingredient "olive oil" to Greek.

An unsteady hand could cause some issues, however. During one text-capture, the text was too blurry for Lens to recognize the correct word, and at one point, it mistook certain text from the recipe's instructions for the name of a cultural landmark.

Fashion and Clothing

As a bit of a recovering Fashion Week junkie, I've always wondered: Wouldn't it be cool if I could hold my phone up to a ready-to-wear look during a runway show, and instantly get information about the fabric, color, style, and more?

Lens boasts the ability to do that -- or at least, something very similar, with image recognition technology that can show users similar looks for clothing, accessories, or items of furniture.

Since I wasn't quite invited to Fashion Week this year, I wasn't able to put that exact scenario to the test -- but I was able to experiment with it using certain items of clothing and furniture in my own closet and home.

I began with a red jumpsuit:

While Lens did recognize that I was trying to capture a red item of clothing, it fell short on some of the finer details -- interpreting the outfit to be either a dress or a skirt.

It did slightly better with a chair, though it didn't identify the original brand or manufacturer.

Finally, I decided to see how the Lens object detection worked on a free-standing punching bag, where the results were a bit disappointing -- only displaying information about the manufacturer's brand, and image matches for what I was trying to capture.

Plants

I have a confession to make: I've unwittingly killed every plant I've tried to bring into my home -- making it a bit difficult to test Lens' recognition capabilities on our green friends.

Luckily, I do have a refrigerator full of vegetables -- and those count as plants, right?

I decided to see how well Lens would do with recognizing a stalk of celery, as well as a container of fresh basil.

Yikes. Lens really struggled with this one, and couldn't actually identify the celery or the basil. It did turn up similar image results -- and, interestingly, recognized the barcode on the container of basil, yielding an option to buy it on grocery shopping app Instacart.

But when it came to actually identifying the plant life, Lens got it wrong both times, misinterpreting the celery as the legume apios, in addition to mistakenly labeling the basil as both a houseplant and a flowering plant.

Animals

Finally -- my favorite category. Being that he's a shelter pet, no one has ever been entirely sure of my dog's exact breed, even if he is adorable.

So, how did Lens categorize my four-legged friend?

In the interest of honesty, I tried to get more information on my dog's breed using Lens multiple times, in vain hopes of it telling me that he is, in fact, the dachshund-spaniel mix I've believed him to be for over three years.

But, alas -- Lens yielded the breed result of Markiesje multiple times -- leading me to think that its dog breed recognition technology could use some work, or that I'm about to have an empathetic identity crisis on behalf of my canine companion.

General Products and Objects

For my final experiment, I decided to run around and have an absurd amount of time-wasting fun with Lens. Could it recognize a pen? A lint roller? My face?

Here's how that test turned out. And if you wish to have your own procrastinatingly good time, check out Lens on Google's iOS app here, or on Android devices.

Featured image credit: Google // Music credit: Song -- "Benny Hill Theme"/Artist -- James Rich, Randolph Boots, Leslie Mark Hill/Writers -- Boots Randolph, James Rich/Licensed to YouTube (source) by Believe Music; Sony ATV Publishing, PEDL, CMRRA, and 16 Music Rights Societies
