Fictional references aside, let’s explore what exactly constitutes a bot, and how we arrived at an era in which -- whether you want to admit it or not -- bots have become essential to some of our most routine tasks.

What Are Bots?

A bot is a type of automated technology that’s programmed to execute certain tasks without human intervention. That is, it might be prompted by a human to perform an action, but it can carry it out on its own.

Bots are one form of artificial intelligence (AI), which my colleague Mimi An explains brilliantly -- it’s “technology that can do things humans can uniquely do, whether it’s talk, see, learn, socialize, and reason.” So many of the bots with which we’re most familiar today, like Siri and Google Assistant, are artificially intelligent enough to answer questions like a human being would.

Most of us use bots regularly -- 55% of us use voice assistant technology, for example, on a daily or weekly basis. They’ve become common solutions for a number of needs, like customer service, or you might have seen one used as a promotion that you didn’t even realize was a bot. How does it work? We’ll get to that. First, let’s see where this all began.

A Brief History of Where Bots Come From

The 1950s

The Turing Test

In 1950, computer scientist and mathematician Alan Turing developed the Turing Test, also known as the Imitation Game. Its most primitive format required three players -- A, B, and C.

- Player A was a machine and player B was a human.

- Player C, also a human, was the interrogator, and would enter questions into a computer.

- Player C received responses from both Players A and B.

- The trick was, Player C had to determine which one was human.

But there was a problem. At the time, databases were highly limited, and could therefore store only so many human phrases. That meant that the computer would eventually run out of answers to give Player C, eliminating the challenge and prematurely ending the test.

The Test Is Still Around -- and Cause for Controversy

In 2014, the University of Reading hosted Turing Test 2014, in which a panel of 30 judges filled the position of Player C. If the machine convinced the judges that its answers came from a human more than 30% of the time, it would be considered to have “beaten” the test -- which is exactly what happened. An AI program named Eugene Goostman, developed to simulate a 13-year-old boy in Ukraine, had 33% of the judges convinced that he was human.
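The pass criterion described above boils down to simple arithmetic. Here is a minimal sketch in Python; the function name is hypothetical, and the count of 10 judges is an assumption inferred from 33% of a 30-judge panel, not a figure reported by the university.

```python
def passes_turing_threshold(judges_fooled: int, total_judges: int,
                            threshold: float = 0.30) -> bool:
    """Return True if the share of judges fooled is strictly above the threshold."""
    if total_judges <= 0:
        raise ValueError("total_judges must be positive")
    return judges_fooled / total_judges > threshold


# Assumption: 33% of a 30-judge panel corresponds to 10 judges.
print(passes_turing_threshold(10, 30))  # True
print(passes_turing_threshold(9, 30))   # False, since exactly 30% is not "more than 30%"
```

The strict comparison matters: a machine that fools exactly 30% of the panel would fall just short.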

According to the university, it was the first occasion on which any AI technology was said to have passed the Turing Test. But those results were met with praise and criticism alike -- the contention was even a topic on NPR’s popular “All Things Considered”. Many were skeptical of Eugene Goostman’s capabilities and asked whether they were really any more advanced than the most primitive forms of AI technology.

Regardless, Turing is rightfully considered a pioneer in this space, as he may have set into motion a series of events that led to AI as we know it today. Only six years later, the 1956 Dartmouth Summer Research Project on Artificial Intelligence was run by mathematics professor John McCarthy -- who is said to have coined the term “artificial intelligence” -- which led to AI becoming a research discipline at the university.

In 1958, while at MIT, McCarthy went on to develop LISP -- a programming language that became the preferred one for AI and, for some, remains so today. Many major players in this space, including computer scientist Alan Kay, have praised LISP as the “greatest single programming language ever designed.”

The 1960s

ELIZA

One of the most significant AI milestones of the 1960s was the development of ELIZA -- a bot, named in part for the Pygmalion character, whose purpose was to simulate a psychotherapist. Created in 1966 by MIT professor Joseph Weizenbaum, the technology was limited, to say the least, as was ELIZA’s vocabulary. That much was hilariously evidenced when I took it for a spin -- which you can do, too, thanks to the CSU Fullerton Department of Psychology.

But Weizenbaum knew that there was room for growth, and himself likened ELIZA to someone “with only a limited knowledge of English but a very good ear”.

“What was made clear from these early inventions was that humans have a desire to communicate with technology in the same manner that we communicate with each other,” Nicolas Bayerque elaborated in VentureBeat, “but we simply lacked the technological knowledge for it to become a reality at that time.”

That wasn’t helped by the 1966 ALPAC report, which was rife with skepticism about machine translation and pushed for a virtual end to all government funding for AI research. Many blame this report for the loss of years’ worth of progress, with very few significant developments taking place in the bot realm until the 1970s -- though the late 1960s did see a few, such as the Stanford Research Institute’s invention of Shakey, a somewhat self-directed robot with “limited language capability”.

The inaugural International Joint Conference on Artificial Intelligence was also held in 1969 -- a conference that still takes place annually -- though any noticeably revitalized interest or attention paid to AI would be slow to follow.

The 1970s - 1980s

Freddy

In the early 1970s, there was much talk around Freddy, a non-verbal robot developed by researchers at the University of Edinburgh that, while incapable of communicating with humans, was able to assemble simple objects without intervention. The most revolutionary element of Freddy was its ability to use vision to carry out tasks -- a camera allowed it to “see” and recognize the assembly parts. However, Freddy wasn’t built for speed, and it took 16 hours to complete these tasks.

Bots in Medicine

The 1970s also saw the earliest integrations of bots in medicine. MYCIN came about in 1972 at Stanford Medical School, using what was called an expert system -- asking the same types of questions a doctor would to gather diagnostic information and referring to a knowledge base compiled by experts for answers -- to help identify infectious disease in patients.

That was followed in the mid-1970s by the similar INTERNIST-1, created at the University of Pittsburgh and, it’s worth noting, reliant on McCarthy’s LISP. INTERNIST-1 then evolved into Quick Medical Reference, a “decision support system” that could make multiple diagnoses. This technology has since been discontinued.
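To make the expert-system idea described for MYCIN more concrete, here is a toy sketch in Python: a knowledge base of rules, a set of questions derived from those rules, and a lookup that matches answers against them. Everything in it is hypothetical. The findings, conditions, and function names are placeholders invented for illustration, and this is not how MYCIN or INTERNIST-1 were actually implemented.

```python
# Hypothetical knowledge base: each rule pairs a set of required findings
# with a conclusion. The names are placeholders, not medical facts.
KNOWLEDGE_BASE = [
    ({"finding_a", "finding_b"}, "condition_x"),
    ({"finding_b", "finding_c"}, "condition_y"),
]


def questions_to_ask(knowledge_base):
    """Collect every finding the rules mention, so each is asked about once."""
    findings = set()
    for required, _conclusion in knowledge_base:
        findings |= required
    return sorted(findings)


def diagnose(answers, knowledge_base=KNOWLEDGE_BASE):
    """Return the conclusions whose required findings were all answered 'yes'."""
    confirmed = {finding for finding, present in answers.items() if present}
    return [conclusion for required, conclusion in knowledge_base
            if required <= confirmed]


# Simulated yes/no answers, standing in for a question-and-answer session.
answers = {"finding_a": True, "finding_b": True, "finding_c": False}
print(questions_to_ask(KNOWLEDGE_BASE))  # ['finding_a', 'finding_b', 'finding_c']
print(diagnose(answers))                 # ['condition_x']
```

The point of the design is the separation: the experts' knowledge lives in the rules, while the code that asks questions and matches answers stays generic.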

Larger Conversations Around Bots

The early 1980s saw a continuation of larger meetings on AI, with the first annual conference of the American Association for Artificial Intelligence taking place at the start of the decade. That decade also saw the debut of AARON, a bot that could create original artwork, both abstract and representational, which was exhibited -- among other venues -- at the Tate Gallery, Stedelijk Museum, and San Francisco Museum of Modern Art.

Despite all of the very recent talk of self-driving cars, the technology behind them actually dates back to the 1980s, as well. In 1989, Carnegie Mellon professor Dean Pomerleau created ALVINN -- “Autonomous Land Vehicle In a Neural Network” -- a military-funded vehicle that could operate itself, to a limited degree, under computer control.

The 1990s - early 2000s

Consumer-Facing Bots

The following decade saw bots shift toward becoming increasingly consumer-facing. Some of the earliest computer games are often thought of as primitive consumer-facing bots, especially those in which players had to type in commands to progress -- though no one really seems to remember what any of them were called.

One more example was the Tamagotchi, released in 1996. Though not specifically labeled as a bot, the interaction was similar -- it was a handheld, computerized “pet” that needed digitized versions of the care a real dependent might require, like feeding, playtime, and bathroom breaks.

If nothing else, robots were becoming smarter and more capable in the 90s, to the extent that they were even able to independently compete in athletic matches -- one of the most notable instances was the first official RoboCup games and conference in 1997, in which 40 teams composed exclusively of robots competed in tabletop soccer matches.

SmarterChild and More

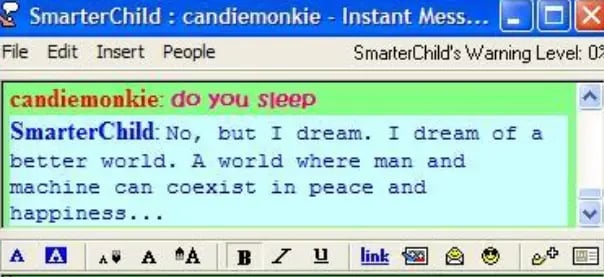

The year 2000 was somewhat pivotal in the realm of humans speaking to bots. That's the year SmarterChild -- "a robot that lived in the buddy list of millions of American Online Instant Messenger (AIM) users," writes Ashwin Rodrigues for Motherboard -- was released. It was pre-programmed with a backlog of responses to any number of queries but, on some level, it was really a primitive version of voice search tools like Siri.

"Google had already been out and Yahoo! was strong, but it still took a few minutes to get the kind of information you wanted to get to,” Peter Levitan, co-founder and CEO of ActiveBuddy -- the company behind SmarterChild -- told Motherboard. "You said, ‘what was the Yankee’s score last night,’ and as soon as you hit enter, the result popped up." It was technology that he says, at the time, "blew people’s minds."

And while the technology was, at first, enough for Microsoft to acquire ActiveBuddy, it wasn't long before Microsoft discontinued the technology.

The early 2000s also saw further progress in the development of self-driving cars, when Stanley, a vehicular bot invented at Stanford, was the first to complete the DARPA Grand Challenge -- a 132-mile-long course in the Mojave Desert that had to be traversed in under 10 hours.

Bayerque, for his part, sees the real catalyst for major bot progression in the increasing popularity of smartphones during the 2000s. It began, he claims, when developers had the “obstacle of truncating their websites to fit onto a much smaller screen,” which ultimately led to a conversation about usability and responsiveness. “Could we find a better interface?” he subsequently asked. As it turns out, we could -- one with which we ultimately began interacting as if it were another person.

Enter voice search.

2011 and Beyond

Building on Bayerque’s point about smartphone use, it can be conjectured that voice search drove the rapid growth of bots among consumers by introducing AI personal assistance. Prior to that, one of the few household names in AI was iRobot, which actually began as a defense contractor and eventually moved into manufacturing what the Washington Post calls a “household robot” -- namely, the Roomba.

But people couldn’t communicate with Roomba on a verbal level. Sure, it could clean the floors, but it couldn’t really help with anything else. So when Siri was introduced in 2011 and could answer questions -- on demand, right from your phone, and without having to visit a search engine -- the game changed. There was new technology to improve upon and, therefore, a newly eager market for assistive voice technology.

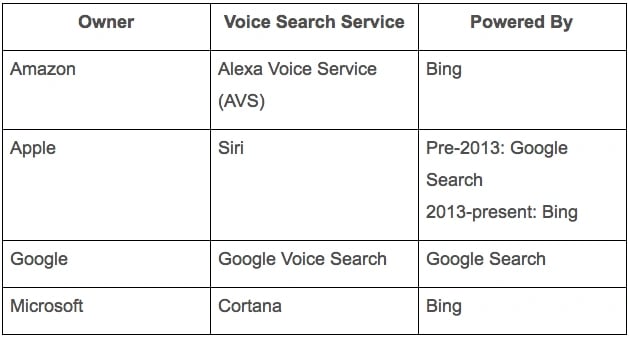

Today, we have four major pillars of voice search:

It didn’t take long for manufacturers to combine voice search with the Internet of Things -- in which things like home appliances, lighting, and security systems can be controlled remotely. Amazon released its Echo only three years after Siri debuted, using a bot named Alexa to not only answer your questions about the weather and measurement conversions, but also to handle home automation.

Google, of course, had to enter the space, and did so with its 2016 release of Google Home.

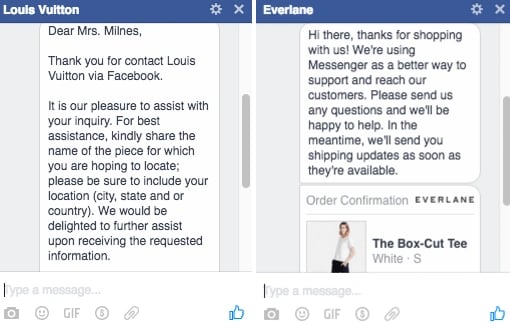

The key theme here has really become service. At this point, bots aren’t just being used for voice search and other forms of personal assistance -- brands have also started implementing them for customer service. In April 2016, Facebook announced that its Messenger platform could be integrated with bots -- which would “provide anything from automated subscription content like weather and traffic updates, to customized communications like receipts, shipping notifications, and live automated messages all by interacting directly with the people who want to get them.” Two major brands -- Louis Vuitton and Everlane -- are among those using the platform for customer service.

We’re even seeing a revamping of the technology that was pioneered by MYCIN and INTERNIST-1 with bots like HealthTap, which uses -- you guessed it -- a database of medical information to automatically answer user questions about symptoms.

But even all of the developments listed here are merely the tip of the iceberg. Just look at this additional research on bots from An -- there are currently so many uses for bots that it’s virtually (if you’ll excuse the pun) impossible to list them all in one place.

...And It's Not Over Yet

Just this month, an announcement was made about yet another personal assistant bot, Olly. As Engadget described it, it shares most of the same capabilities as the Echo and Google Home, but it has a better personality -- yours.

Yes, that’s correct -- we’re now working with bots that are trained to observe your behavioral patterns, listen to the way you speak, and begin to imitate it. Whether it’s the food you order, the books you read, or the jargon you use, Olly can figure it out and take it on. Cool, or creepy?

This latest development just goes to show that what used to be limited to science fiction is very quickly becoming reality. And no matter how you feel about it -- scared, excited, or both -- nobody can accuse it of being dull. We’ll be keeping an eye on the growth of bots, and look forward to sharing further developments.

What are your favorite facts about the evolution of bots? Let us know in the comments.
