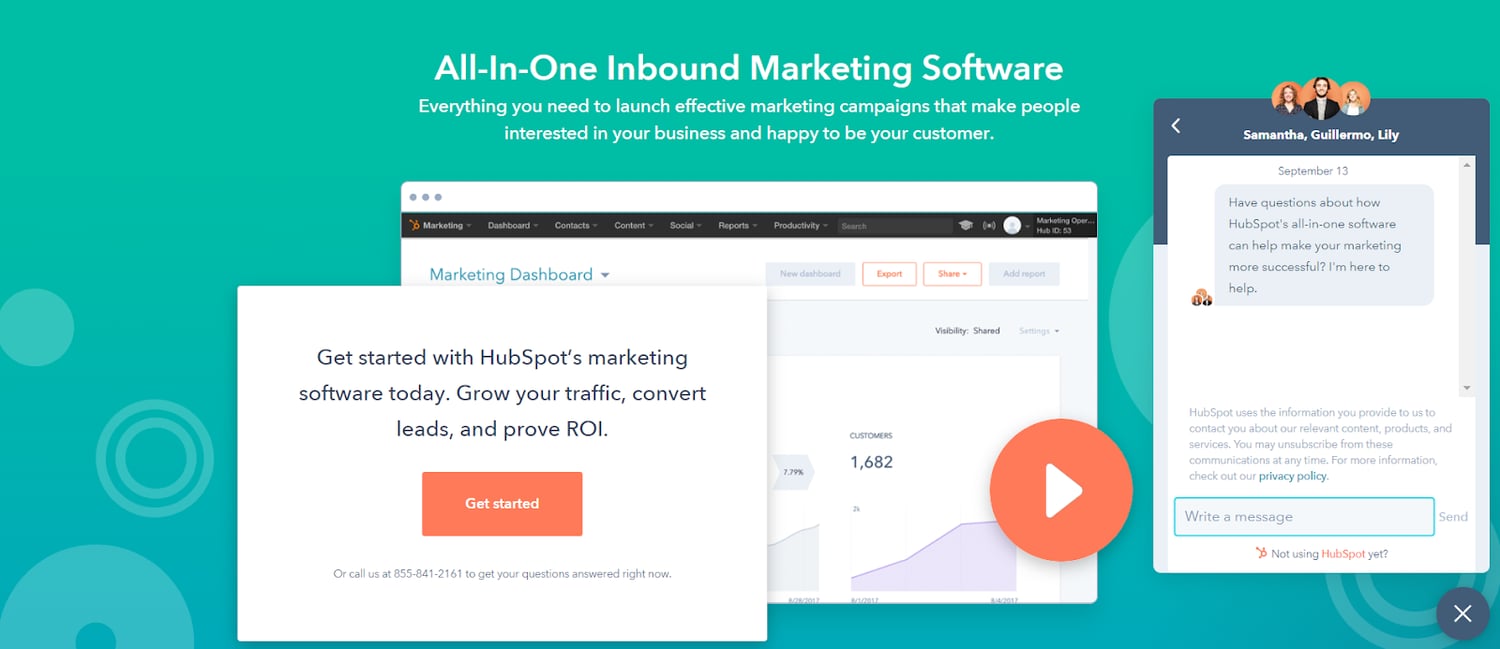

When my marketing team at HubSpot connected with the sales team to discuss the issue, we recognized live chat alone no longer suited our needs. To appropriately communicate with each prospect and create that highly personalized experience our website visitors crave, we needed to scale our sales team's productivity with a chatbot.

Here, we'll explore why we decided to build a chatbot, how we designed the experience, and why it might be an outstanding solution for your own business in 2019 — and beyond.

For an in-depth overview on how chatbots can elevate your own landing pages and help your business grow, check out our video guide.

Why Live Chat Didn't Work For Us

When the live chat sales team reached out to us, we knew we needed to make the team more efficient.

Live chat in and of itself wasn't the problem. Unfortunately, though, we weren't tailoring conversations to each visitor and their problems. We knew that sifting through every visitor's inquiry was the right thing to do, since it helped us solve for the customer and answer prospects' questions.

But since every inquiry fell into the same bucket, it became increasingly difficult for the sales team to keep up. And the majority of people who didn't leave us a way to get back in touch were gone forever.

At one point, over a third of the people who chatted with us never heard anything back.

Additionally, hundreds of the chats we received each month were from users who simply needed product support. This ate part of our sales team's valuable bandwidth, making it harder for them to get in touch with site visitors who actually needed to speak with a sales rep.

Ultimately, this poor experience added unnecessary friction for our site visitors and sales reps.

Why We Decided to Build a Chatbot

After some internal discussion, we set out to build a chatbot that would engage with visitors, triage them, and get them to the right place sooner. This would be a win for both our site visitors and the sales team.

To figure out what this chatbot should do, we first looked at live chat transcripts. They're an invaluable resource when doing conversational marketing, since you can hear in the prospect's own words what they want to do. There are technical ways to classify chats (like natural language processing), but a qualitative approach is fine to start.

We then sorted the intents from live chat into three buckets: sales, support, and a catch-all "other". To start the conversation, the chatbot prompted people to select the topic that best matched their intent.
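If you want a rough first pass before reading transcripts by hand, a simple keyword match can approximate the three buckets. The sketch below is only an illustration of that idea, not the process we used: the keyword lists and the `bucket_intent` and `summarize` names are made up for this example, and real transcripts would need their own vocabulary.

```python
from collections import Counter

# Hypothetical keyword lists. In practice these would come from
# reading real chat transcripts, not from guesswork.
INTENT_KEYWORDS = {
    "sales": ("pricing", "demo", "upgrade", "quote"),
    "support": ("error", "bug", "not working", "reset"),
}


def bucket_intent(message: str) -> str:
    """Return 'sales', 'support', or the catch-all 'other'."""
    text = message.lower()
    for intent, keywords in INTENT_KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return intent
    return "other"


def summarize(transcripts: list[str]) -> Counter:
    """Count how many opening messages land in each bucket."""
    return Counter(bucket_intent(message) for message in transcripts)
```

Running `summarize` over a month of opening messages gives a quick sense of how large each bucket is, which helps decide which quick-reply options the chatbot should offer first.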

After speaking with our support team, we learned that getting technical help with a product while on the website can be tricky. There are rich and thorough resources better suited to these kinds of answers. So, rather than keeping someone in the chatbot, we used what we knew about them in the CRM to point them in the right direction. The chatbot would look at their contact record, and then serve up a contextual web page depending on the products they were using.
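The routing logic described above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not our actual implementation: the contact record is modeled as a plain dictionary with a hypothetical `products` list, and the `example.com` URLs are placeholders.

```python
from typing import Optional

# Placeholder help pages keyed by hypothetical product names.
SUPPORT_PAGES = {
    "marketing": "https://example.com/help/marketing",
    "sales": "https://example.com/help/sales",
}
DEFAULT_SUPPORT_PAGE = "https://example.com/help"


def support_page_for(contact: Optional[dict]) -> str:
    """Pick a help page based on the products on a contact record.

    Falls back to a general help page when the visitor is unknown
    or uses no product that has a dedicated page.
    """
    if not contact:
        return DEFAULT_SUPPORT_PAGE
    for product in contact.get("products", []):
        if product in SUPPORT_PAGES:
            return SUPPORT_PAGES[product]
    return DEFAULT_SUPPORT_PAGE
```

The fallback matters as much as the match: an anonymous visitor still gets a useful destination instead of a dead end.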

Even though our prospects couldn't connect with a human right away, we set them up for better long-term success.

Next, we recognized a large percentage of the people who interacted with live chat did, in fact, want to talk to our sales team. But that didn't mean trying to connect them to a human right away was efficient for either group.

We interviewed and observed the sales team to understand their experience with chat. Right away, we noticed each sales rep needed to know three key facts about the user to tailor the conversation to their business — name, email, and website.

The sales team said in an ideal world, they wanted this context before chatting with a prospect — an issue we knew a chatbot could solve. We programmed the chatbot to collect this information in a natural, contextual way.

Additionally, we knew a prospect would become frustrated if they needed to repeat themselves. In fact, NewVoiceMedia found it to be the most frustrating aspect of customer experience. To combat this issue, we again checked the CRM, and if a visitor had ever filled out a HubSpot form, the chatbot skipped those questions altogether.
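One way to picture the "don't make people repeat themselves" rule is a function that asks only for the fields the CRM doesn't already hold. The sketch below assumes a contact record shaped like a dictionary. The field names match the three facts our sales reps asked for, but the prompts and the `questions_to_ask` name are hypothetical.

```python
from typing import Optional

# The three facts sales reps wanted before a conversation.
REQUIRED_FIELDS = ("name", "email", "website")

# Hypothetical prompt wording, for illustration only.
PROMPTS = {
    "name": "Who do we have the pleasure of chatting with?",
    "email": "What's the best email to reach you?",
    "website": "What's your company's website?",
}


def questions_to_ask(contact: Optional[dict]) -> list[str]:
    """Return prompts only for fields missing from the contact record."""
    known = contact or {}
    return [PROMPTS[field] for field in REQUIRED_FIELDS if not known.get(field)]
```

A returning visitor with a complete record gets an empty list and goes straight to a rep, while a brand-new visitor is asked all three questions.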

Collecting this information up front enabled the sales team to spend more time selling and less time chasing down email addresses.

Our Results

At the beginning of this project, our main goal was to deflect support-related chats away from our sales team. But, even as someone who has been building chatbots for almost four years, I'll admit — what we saw was shocking.

When compared to live chat, 75% more people engaged with the chatbot.

Additionally, over 55% of people gave real answers to the basic qualifying questions and reached a human. While the drop-off there may seem steep, sifting out low-intent people proved incredibly helpful for the sales team.

Ultimately, our chatbot succeeded for a few reasons. First, the chatbot anticipated the next step of the conversation and used quick replies to drive a visitor forward. Opening up a chat and clicking a contextual button involves less friction than typing in a text field, so people no longer needed to come up with the first message themselves.

It makes sense that multiple choice options drive more engagement. Think of it this way — when you meet someone new, often the hardest part of the conversation is the beginning, when you're trying to think of something to say. But once the conversation starts rolling, things become smoother.

The same is true for our prospects when they engage with a chatbot versus live chat.

Additionally, 9% of people who chatted with the bot needed product support. We were able to deflect those visitors away from humans in a helpful way, serving up the best place to get answers to their questions quickly.

It's important to note that when designing conversations, the hardest and most overlooked task is writing like a human. As consumers, we rarely think to ourselves that we want to "talk to sales". Instead, we only know we want to talk about "products I'm not yet using". Writing with the "jobs to be done" in mind is a great philosophy for conversational design.

Takeaways for Your Business

At the end of the experiment, I found a few takeaways that can help you and your business succeed as well.

First, chatbots are a great opportunity to meet your visitors where they are. If you want to get started with a chatbot, look at chat transcripts, or interview your sales team to understand the types of questions people typically ask. Bucket those questions into a few critical categories.

Also, it's critical that you and your team think through and anticipate the best way to help each of those buckets of people. The more you can personalize, perhaps through your CRM, the better.

Ultimately, using a chatbot to get people the help they need is a huge win for both your prospects and your business. In our case, site visitors now had the best resources for their support issues, and our sales team was able to capture more qualified leads.

The best part? Both sides did this in a frictionless, efficient way.

Editor's note: This post was originally written in February 2019 and has been updated for comprehensiveness.
