By reducing network latency issues, you can ensure that user requests — whether for online purchases or internal links — are met as quickly as possible.

Such speed is critical to providing a seamless user experience on your website.

In this post, I’ll discuss what latency is and how it works. Then, I’ll walk you through several ways to improve yours. To be fair, the subject of latency is a bit technical, so I’ll use as many analogies as possible to make it easier to understand.

So watch out for those traffic analogies.

Table of Contents

- What Is Latency?

- How Does Latency Work?

- Why Improve Latency? (And Why It Matters to Businesses)

- How to Improve Latency

What Is Latency?

Latency is the delay between the browser sending a request to the server and the server processing that request on a network or internet connection.

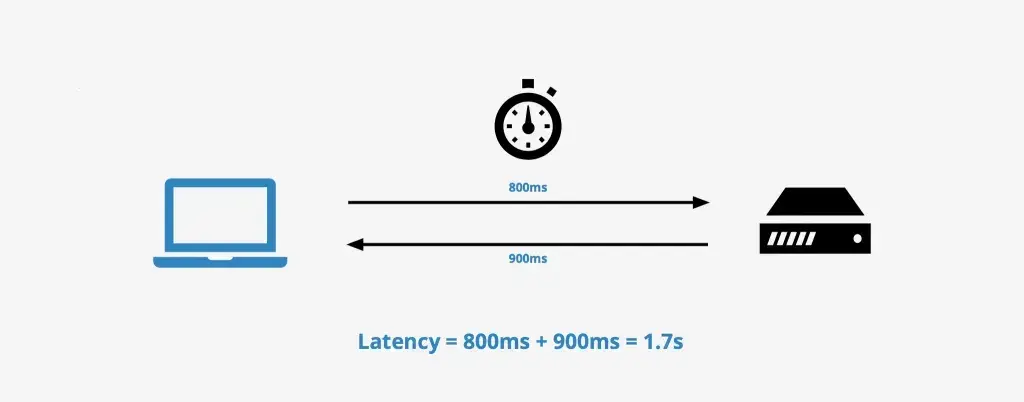

Network latency is usually measured in milliseconds (ms). For example, if a request takes 800 ms to travel from the browser to the server and the response takes another 900 ms to come back, the total latency is 1,700 ms, or 1.7 seconds.

Think of latency as being stuck in traffic. Just as it’ll take longer to reach your destination because of traffic, data packets or requests experience delays if the network is congested.

The longer the delay, the higher the chances of people leaving your website. As such, you must do all you can to maintain low latency or reduce high latency.

Now that you understand what latency is, let’s explore how it works in practice.

How Does Latency Work?

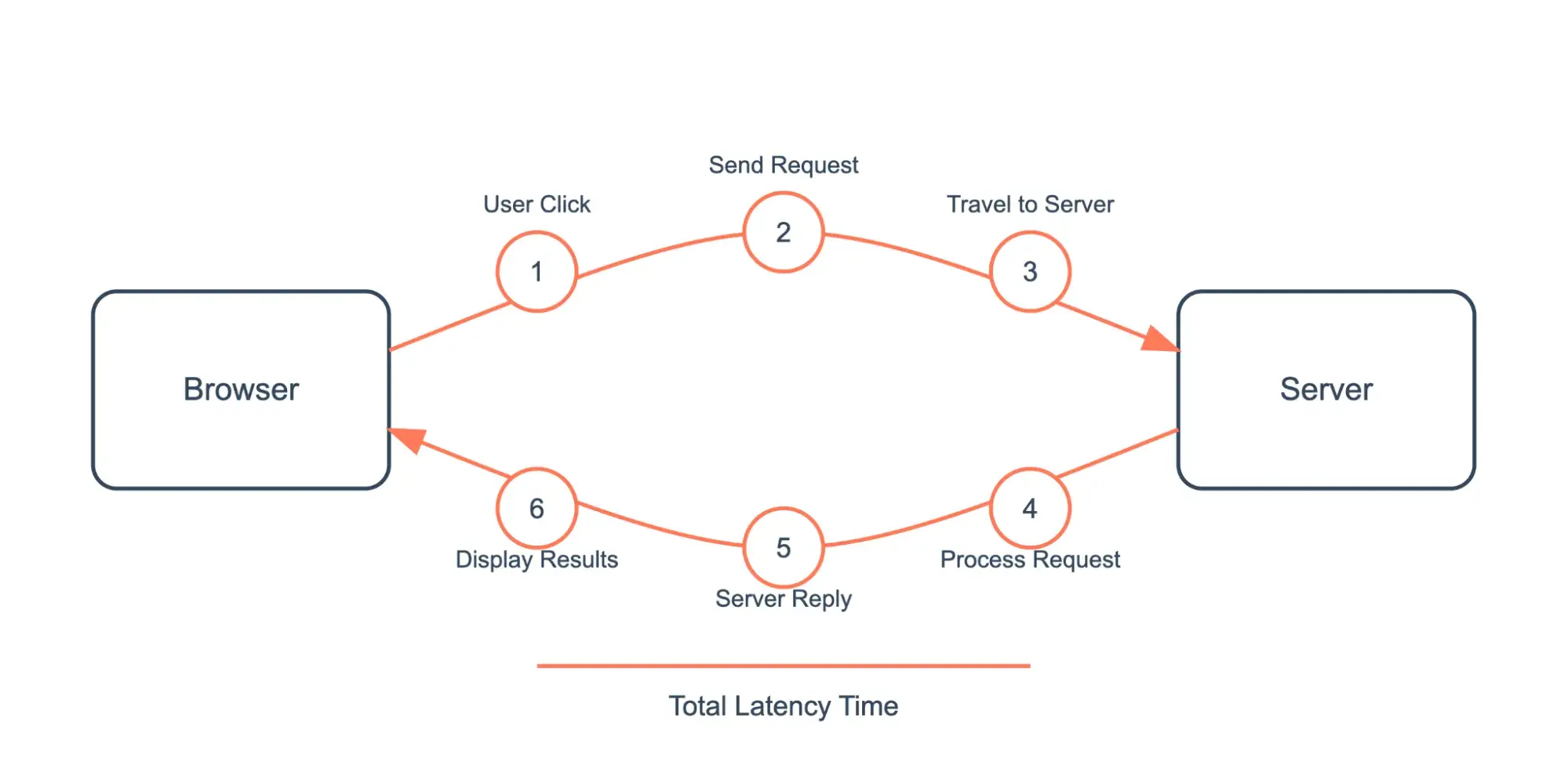

Once a user makes a request, several steps must happen before getting a response.

Take the following example. Say a user is browsing an ecommerce website and clicks a category. To display items from that category on the user’s browser, the following has to happen:

1. The user clicks on the category.

2. The user’s browser sends a request to the server of the ecommerce website.

3. The request travels to the site’s server with all the necessary information. Transmitting this information takes time; the more information sent, the more time it takes to transmit.

4. The server gets the request.

5. The server either accepts or rejects the request before processing it. Each of these steps takes time. The amount of time depends on the server’s processing capacity and the amount of information being processed.

6. The server replies to the end user with the necessary information about their request.

7. The user’s browser gets the reply and displays the product category.

Steps 1-4 represent the first part of the latency cycle, and steps 5-7 represent the second part.

To get the total latency resulting from the request, you add up all the time increments, starting from when the user clicks on the category to when they see those products.
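As a rough illustration of that addition, here is a minimal Python sketch. The step names mirror the list above, but every duration is a made-up number chosen only to show the arithmetic, not a measurement.

```python
# Hypothetical durations (in milliseconds) for the seven steps listed above.
# These numbers are invented for illustration; real values vary widely.
STEPS_MS = [
    ("user clicks the category", 5),
    ("browser sends the request", 10),
    ("request travels to the server", 120),
    ("server receives the request", 5),
    ("server accepts and processes the request", 60),
    ("server sends the reply", 120),
    ("browser receives and displays the reply", 30),
]


def total_ms(steps):
    """Add up the duration of every (name, milliseconds) step."""
    return sum(duration for _name, duration in steps)


first_part = total_ms(STEPS_MS[:4])   # steps 1-4
second_part = total_ms(STEPS_MS[4:])  # steps 5-7
total_latency = first_part + second_part

print(f"Steps 1-4: {first_part} ms")
print(f"Steps 5-7: {second_part} ms")
print(f"Total latency: {total_latency} ms")
```

With these invented numbers, the two network trips (steps 3 and 6) dominate the total, which is why so much latency work focuses on the path between browser and server.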

How do you measure latency?

You can measure latency in two ways.

- Round Trip Time (RTT). Round trip time is the time it takes for a request to travel from the browser to the server and for the response to travel back. During a speed test, a ping is used to measure the RTT. A ping sends several requests (usually four) to a specified IP address or domain and records the time taken for each response. A low RTT, or ping time, signifies strong network performance, while higher values indicate congestion or other connectivity issues.

- Time to First Byte (TTFB). TTFB is the time between the browser sending a request to the server and receiving its first data byte. Think of TTFB as how long it takes a delivery man to drop a parcel at the doorstep after reaching his destination.
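If you’d like to take both measurements yourself, the sketch below shows one way to approximate them with Python’s standard library. It rests on a few assumptions: a real ping uses ICMP, which often needs elevated privileges, so the time to open a TCP connection stands in for RTT here; and the TTFB function includes connection setup and stops once the response headers have arrived, which is slightly later than the very first byte. The function names are my own, not part of any tool.

```python
import http.client
import socket
import time


def tcp_connect_rtt_ms(host, port=80, timeout=5.0):
    """Approximate RTT as the time needed to open a TCP connection.

    This is a stand-in for an ICMP ping, not the same measurement.
    """
    start = time.perf_counter()
    with socket.create_connection((host, port), timeout=timeout):
        pass  # the connection is open; we only care how long that took
    return (time.perf_counter() - start) * 1000.0


def ttfb_ms(host, port=80, path="/", timeout=5.0):
    """Approximate TTFB for a plain-HTTP GET request.

    Timing starts before the connection is opened and stops when
    getresponse() returns, i.e. once the status line and headers are in.
    """
    conn = http.client.HTTPConnection(host, port, timeout=timeout)
    try:
        start = time.perf_counter()
        conn.request("GET", path)   # connects automatically, then sends the request
        response = conn.getresponse()
        elapsed = (time.perf_counter() - start) * 1000.0
        response.read()             # drain the body so the socket closes cleanly
        return elapsed
    finally:
        conn.close()
```

Calling `tcp_connect_rtt_ms("example.com")` and `ttfb_ms("example.com")` a few times and comparing the results gives a feel for how much of the delay is the network trip and how much is the server’s work.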

While latency associated with a basic HTML page or other single assets may seem inconsequential, it can significantly impact the user experience for an entire website where multiple requests for HTML pages, CSS, scripts, and media files occur.

What causes latency?

These are six of the most common causes of network latency.

1. Distance

The leading cause of latency is distance. The longer the distance between the browser making the request and the server responding to that request, the more time it’ll take the requested data to travel there and back.

That’s why website visitors in the U.S. will get responses from a data center in Council Bluffs, Iowa (one of SiteGround’s data center locations) sooner than website visitors in Europe, for example.

I like to think of this as driving from one city to another. If you live in New York and want to visit Los Angeles, the distance means your journey will take significantly longer than if you were just driving to New Jersey. Similarly, data traveling long distances experiences higher latency.
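To put rough numbers on the road trip, here is a small Python sketch of the best-case round trip imposed by distance alone. It assumes signals move through fiber at about 200,000 km per second (roughly two-thirds of the speed of light in a vacuum) and uses approximate straight-line distances; real cables take longer routes, so actual figures will be higher.

```python
# Assumed signal speed in optical fiber: about two-thirds of light speed in a vacuum.
SPEED_IN_FIBER_KM_PER_S = 200_000


def min_round_trip_ms(distance_km):
    """Lower bound on round trip time caused purely by distance."""
    one_way_seconds = distance_km / SPEED_IN_FIBER_KM_PER_S
    return 2 * one_way_seconds * 1000


# Approximate straight-line distances, for illustration only.
print(f"New York to Los Angeles (~3,900 km): {min_round_trip_ms(3900):.2f} ms")
print(f"New York to New Jersey (~15 km): {min_round_trip_ms(15):.2f} ms")
```

No amount of server tuning can beat this floor, which is why moving content physically closer to users pays off.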

2. Network Congestion

When more data is sent than a network can handle, it causes network congestion, resulting in delays as packets queue up to be transmitted.

Congestion is common during peak usage times or when many users access the network simultaneously.

I’ve experienced such congestion several times during major events like the World Cup final or award shows. You can compare it to rush hour traffic on a busy highway. It’s common for cars to get stuck in traffic jams because there are many other cars on the road at the same time.

The more congested the network, the longer the wait for each packet to move.

Higher bandwidth might ease network congestion, but it ultimately does little to reduce latency.

More bandwidth is like more lanes on the highway. But just as more lanes on a highway don’t make individual cars move faster, more bandwidth doesn’t increase the packet’s speed.
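A quick back-of-the-envelope model makes the lanes analogy concrete. The sketch below assumes an uncongested link, a single 1,500-byte packet, and a fixed 40 ms of travel time; all three figures are illustrative assumptions rather than measurements.

```python
def one_way_time_ms(packet_bytes, bandwidth_mbps, propagation_ms):
    """Time for one packet to arrive: travel time plus time to put its bits on the wire."""
    bits = packet_bytes * 8
    transmission_ms = bits / (bandwidth_mbps * 1_000_000) * 1000
    return propagation_ms + transmission_ms


# Same packet, same distance, ten times the bandwidth.
slow_link = one_way_time_ms(packet_bytes=1500, bandwidth_mbps=10, propagation_ms=40)
fast_link = one_way_time_ms(packet_bytes=1500, bandwidth_mbps=100, propagation_ms=40)

print(f"10 Mbps link:  {slow_link:.2f} ms")
print(f"100 Mbps link: {fast_link:.2f} ms")
```

In this model, a tenfold bandwidth increase saves only about a millisecond, because nearly all of the time is spent traveling, not waiting to get onto the road.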

3. Number of Hops

Each time data passes through a router, switch, or “hop,” processing time increases. More hops mean more potential delay points, which can increase overall latency.

I think of this scenario as a road trip with multiple stops. Each time you stop at a traffic light or intersection, you add time to your journey. Just as each stop adds time to your road trip, each router hop adds to data transmission time.

4. Packet Size

When data is sent in large chunks, it can lead to higher latency compared to smaller packets that can be processed and transmitted more quickly.

For example, if a user requests a web page with many images, CSS, and JS files, or content from multiple third-party websites, the server’s response will take longer than if the request were smaller.

5. Server Performance

High traffic demands or insufficient server resources can slow down your server. This leads to increased latency for users.

Let me set the scene for you with another analogy. Imagine arriving at a restaurant during peak hours with only one server, Nathan, taking orders and serving food. As more customers place orders, the wait time will increase. Poor Nathan.

In networking, if a server can’t keep up with incoming requests, users will experience higher latency.
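Nathan’s predicament can be simulated in a few lines. This is a deliberately simple sketch that assumes a single server handling requests one at a time, in arrival order, with a fixed service time; real servers process work in parallel, but the queueing effect is the same in spirit.

```python
def queue_latencies(arrival_times, service_time):
    """Latency of each request at a single first-come, first-served server.

    arrival_times must be sorted; all values share one time unit (say, seconds).
    """
    latencies = []
    server_free_at = 0.0
    for arrival in arrival_times:
        start = max(arrival, server_free_at)  # wait if the server is still busy
        finish = start + service_time
        latencies.append(finish - arrival)    # waiting time plus service time
        server_free_at = finish
    return latencies


# Quiet period: a request every 5 seconds, each taking 2 seconds to serve.
print(queue_latencies([0, 5, 10, 15], service_time=2))  # [2.0, 2.0, 2.0, 2.0]

# Peak hours: a request every second, still 2 seconds each.
print(queue_latencies([0, 1, 2, 3], service_time=2))    # [2.0, 3.0, 4.0, 5.0]
```

Once requests arrive faster than they can be served, every new request waits longer than the one before it.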

6. User-Related Issues

A user might be using an old device, have poor internet speed, or have another issue that increases the network delay.

In other words, no matter how traffic-free a road might be, a journey in an old, slow car will likely take longer to complete.

What is a good latency?

Try as you might, you can’t reduce latency to zero. Trust me. However, it would be best to make it as close to zero as possible.

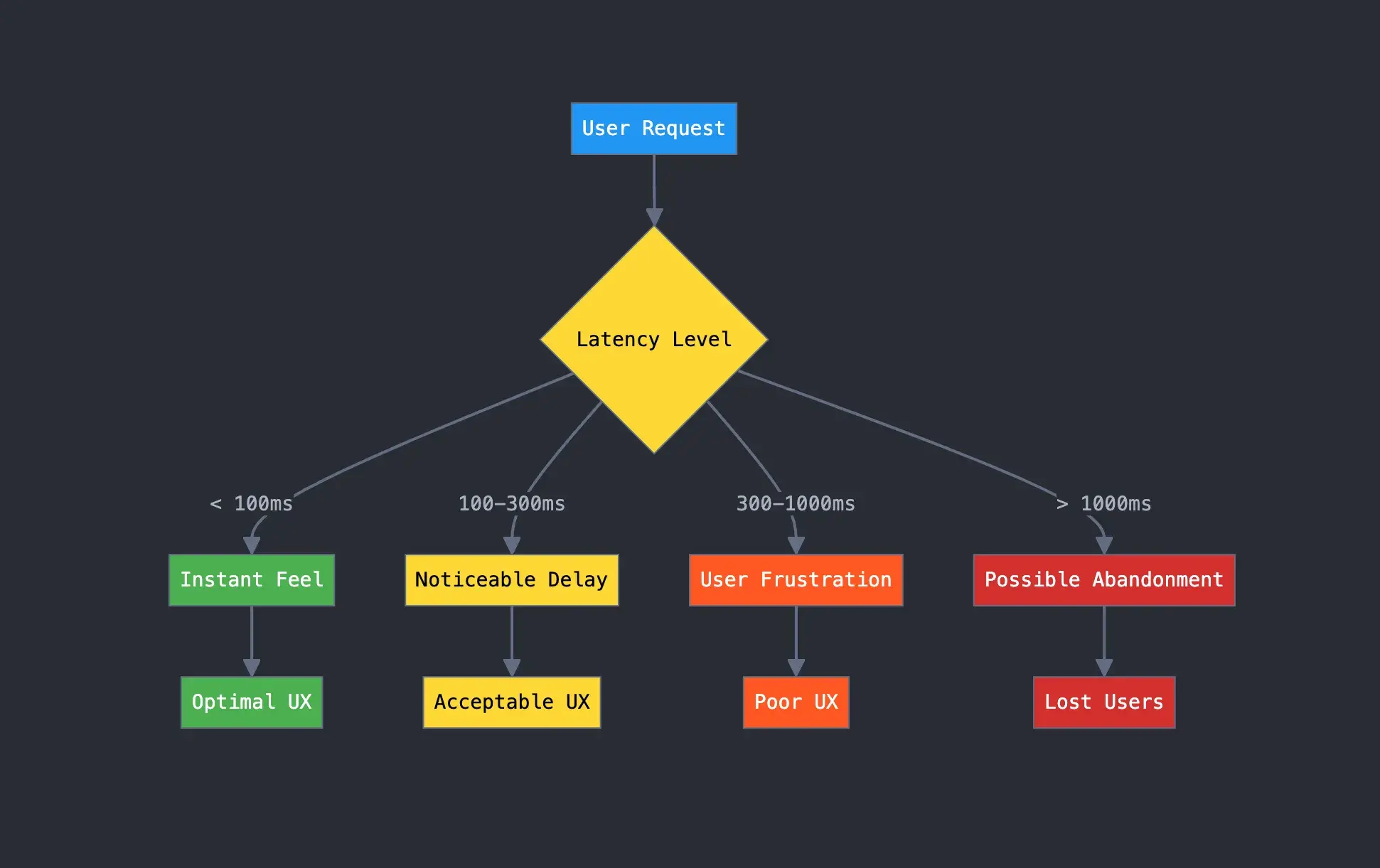

Like a good bounce rate, good latency is relative. Anything less than 100 milliseconds is generally acceptable. The optimal range is even lower, between 20 and 40 milliseconds.

[alt text] flowchart showing what good latency is and the likely result from different levels of latency

What are some use cases for low latency?

Here are three specific industries that rely on having low latency.

- Financial trading institutions. Financial trading companies need low latency for day-to-day operations. Traders often rely on real-time data to make split-second decisions. Given how volatile the markets can be, any time delay could result in financial loss or failure to maximize profit opportunities.

- Online gaming companies & communities. Nobody enjoys playing a game when there’s a noticeable lag. It sucks. Low latency ensures a user’s gaming experience remains seamless. The same applies to video streaming platforms. I’ve abandoned so many TV shows simply because the platform lagged when I was ready to watch.

- Video conferencing and collaborating tools. Businesses today rely more on video conferencing tools like Zoom and Google Meet for real-time communication. High latency often causes delays that interrupt the flow of conversations. Low latency, on the other hand, ensures clear audio and visual quality required for effective communication.

Why Improve Latency? (And Why It Matters to Businesses)

Page speed is critical to the user experience. So much so that all the way back in 2010, Google announced that page speed was a ranking factor for search. While they didn’t give an exact number, they did say their goal was less than half a second.

While hitting this target may seem difficult, there are several ways to optimize your website’s speed. A major one is to improve your latency.

Low latency ensures a website’s optimal performance and contributes to customer satisfaction. Everyone I know is happier when website content loads almost instantly.

In addition, many businesses need low latency to comply with regulatory laws and standards.

Let’s examine some steps you can take to minimize latency below.

How to Improve Latency

- Use a CDN.

- Minify CSS and JavaScript file sizes.

- Compress your images.

- Reduce the number of render-blocking resources.

While users can take steps to reduce latency on their side, like upgrading to a faster internet plan and connecting to closer servers, we’ll focus exclusively on server-side solutions below.

1. Use a CDN.

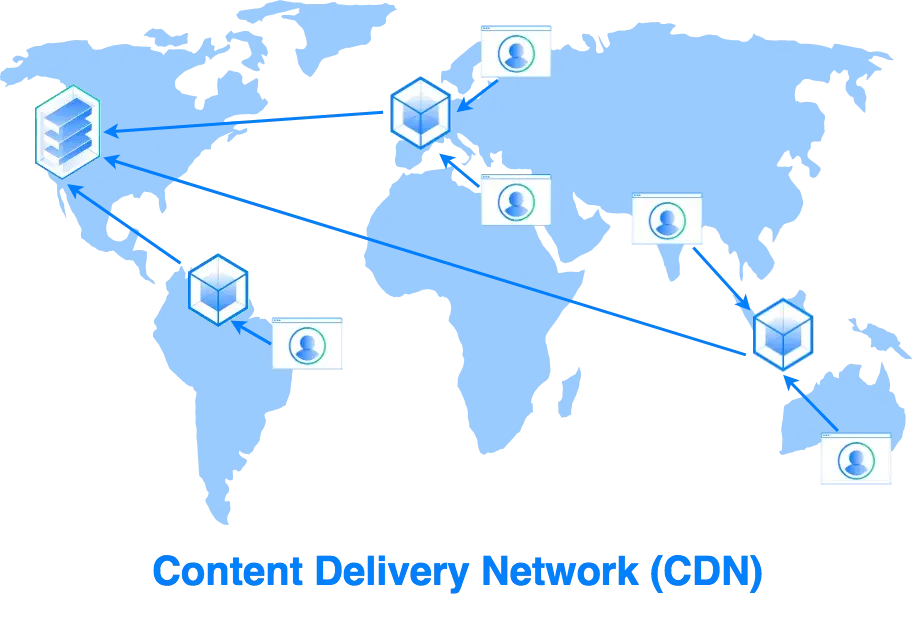

Since latency is related to the distance between a browser and a server, you can reduce latency by bringing the two closer together. While you can’t literally uproot your server location and bring it closer to every user, you can use a content delivery network (CDN).

A CDN is a distributed system of servers designed to deliver web content as quickly as possible to visitors, no matter where they are in the world.

With a CDN, you don’t have to rely on one server to send content all over the world. Instead, the CDN will tap different network servers closest to each unique visitor to deliver the requested assets — usually with limited packet loss.

Once the server closest to the user delivers and displays the requested content, that server makes a copy of those web assets. When another visitor in the same part of the world tries to access that content, the CDN can redirect the request from the origin server to the server closest to them. That server can deliver the cached content much more quickly because it has less distance to travel.
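The copy-and-reuse behavior described above can be sketched as a tiny cache. This is a toy model under loud assumptions: the `EdgeServer` class, the region name, and the `fetch_from_origin` function are all hypothetical, and real CDNs add expiry rules, invalidation, and routing that are left out here.

```python
class EdgeServer:
    """One CDN edge location that keeps a copy of whatever it fetches from the origin."""

    def __init__(self, region, fetch_from_origin):
        self.region = region
        self._fetch_from_origin = fetch_from_origin
        self._cache = {}

    def get(self, path):
        """Return (content, status), where status is "HIT" or "MISS"."""
        if path in self._cache:
            return self._cache[path], "HIT"      # served from the nearby copy
        content = self._fetch_from_origin(path)  # slow, long-distance trip
        self._cache[path] = content              # keep a copy for the next visitor
        return content, "MISS"


origin_requests = []  # records every trip to the (hypothetical) origin server


def fetch_from_origin(path):
    origin_requests.append(path)
    return f"<contents of {path}>"


edge = EdgeServer("eu-west", fetch_from_origin)
first_visit = edge.get("/logo.png")
second_visit = edge.get("/logo.png")
print(first_visit[1], second_visit[1], len(origin_requests))  # MISS HIT 1
```

Only the first visitor in a region pays for the long trip to the origin; everyone after them is served from the nearby copy.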

Here’s an illustration that I think helps explain it.

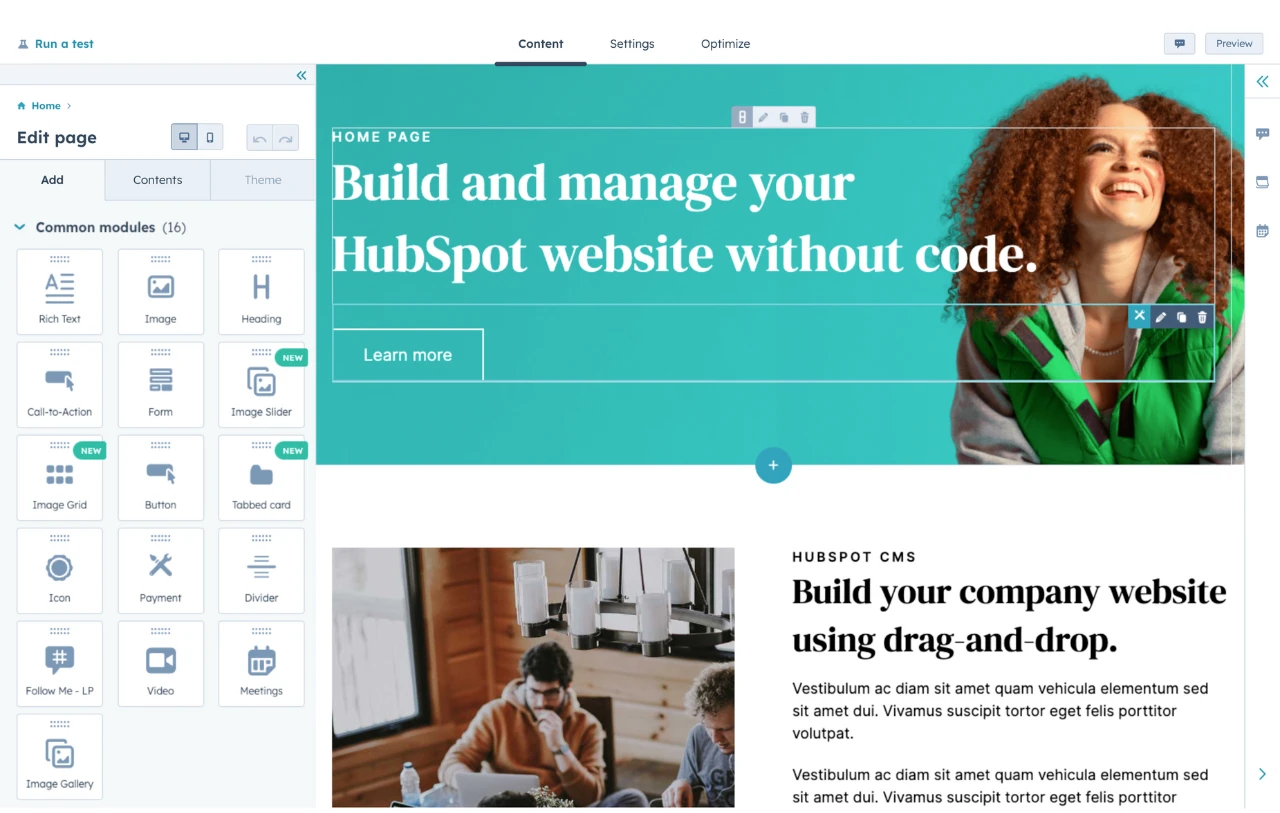

There are two ways you can use a CDN. You can purchase one from a CDN vendor (like Cloudflare) — or you can choose a website-building platform with a built-in CDN. HubSpot’s CMS (which is a part of Content Hub) has a website monitoring tool with a built-in, enterprise-grade CDN that automatically caches and serves your website’s static content from the edge servers closest to each user.

I spoke to Jeffrey Zhou, CEO of Fig Loans, about how they fixed latency issues on their website using a CDN. His team noticed that Fig Loans’ website load time often reached up to 10 seconds for many visitors — especially at month-end when customer activity spiked.

The slow website speed resulted in a 15% decrease in completed applications. Complaints about poor network performance, with specific mentions of “freezing screens,” also overwhelmed Fig Loans’ customer team. Only after restructuring their backend for load balancing and using a CDN was the latency problem resolved.

The results?

Jeffrey says, “With this setup, we reduced average load times by 70%, bringing them under 2 seconds. This optimized speed led to a 22% boost in application completions, proving essential to our client experience and business growth.”

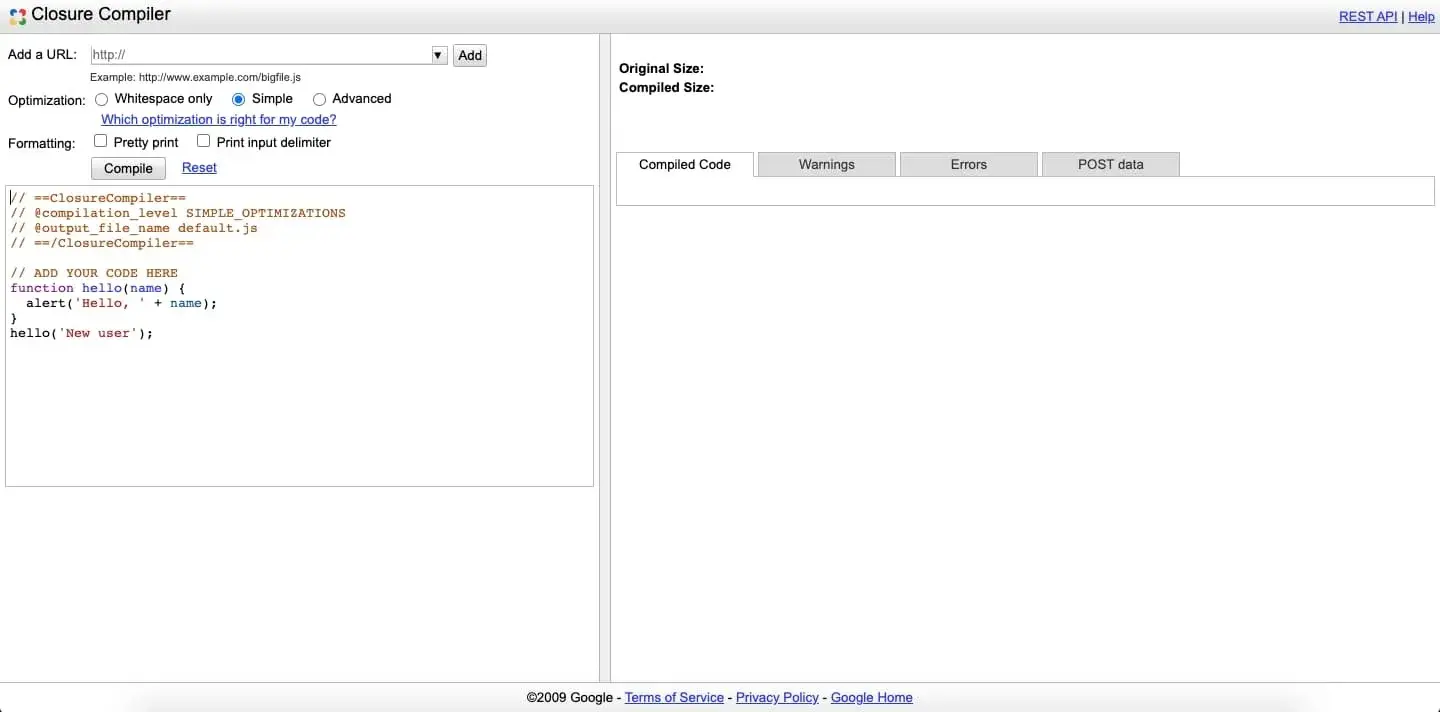

2. Minify CSS and JavaScript file sizes.

Most web pages contain a combination of HTML, CSS, and JavaScript. Whenever a visitor loads a page, CSS and JavaScript files need to be sent from the server to the browser.

That means more HTTP requests, which can greatly increase latency. While you can’t remove the CSS and JavaScript from your web pages, you can reduce the size of these files. The smaller the files, the faster they will travel from the server to the browser.

The good news is you can automate this process with a minifier. Google’s Closure Compiler service, for example, handles JavaScript: all you need to do is add your code into the compiler, click the “Compile” button, and download the minified file.
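To see what a minifier actually does, here is a deliberately naive CSS example in Python. It only strips comments and unnecessary whitespace, and it would mangle edge cases such as punctuation inside quoted strings, so treat it as an illustration of the idea rather than a replacement for a real minifier.

```python
import re


def minify_css(css):
    """Naive CSS minifier: removes comments and unnecessary whitespace only."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.DOTALL)  # drop /* comments */
    css = re.sub(r"\s+", " ", css)                         # collapse runs of whitespace
    css = re.sub(r"\s*([{}:;,])\s*", r"\1", css)           # trim space around punctuation
    css = css.replace(";}", "}")                           # last semicolon in a rule is optional
    return css.strip()


original = """
/* primary button */
.btn {
    color: red;
    margin: 0 auto;
}
"""

minified = minify_css(original)
print(minified)  # .btn{color:red;margin:0 auto}
print(f"{len(original)} characters -> {len(minified)} characters")
```

The browser treats both versions identically; the minified one is simply fewer bytes to send.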

This was the solution Tony Ciccarone, owner of 3tone Digital, used on his client’s site. He says, “Before moving the CSS there was a ~1200ms delay on the page load. After moving and minifying CSS, we got the website back down to ~300ms.”

He adds, “After this fix, the site’s bounce rate decreased by up to 20% and conversion rate increased by 12%.”

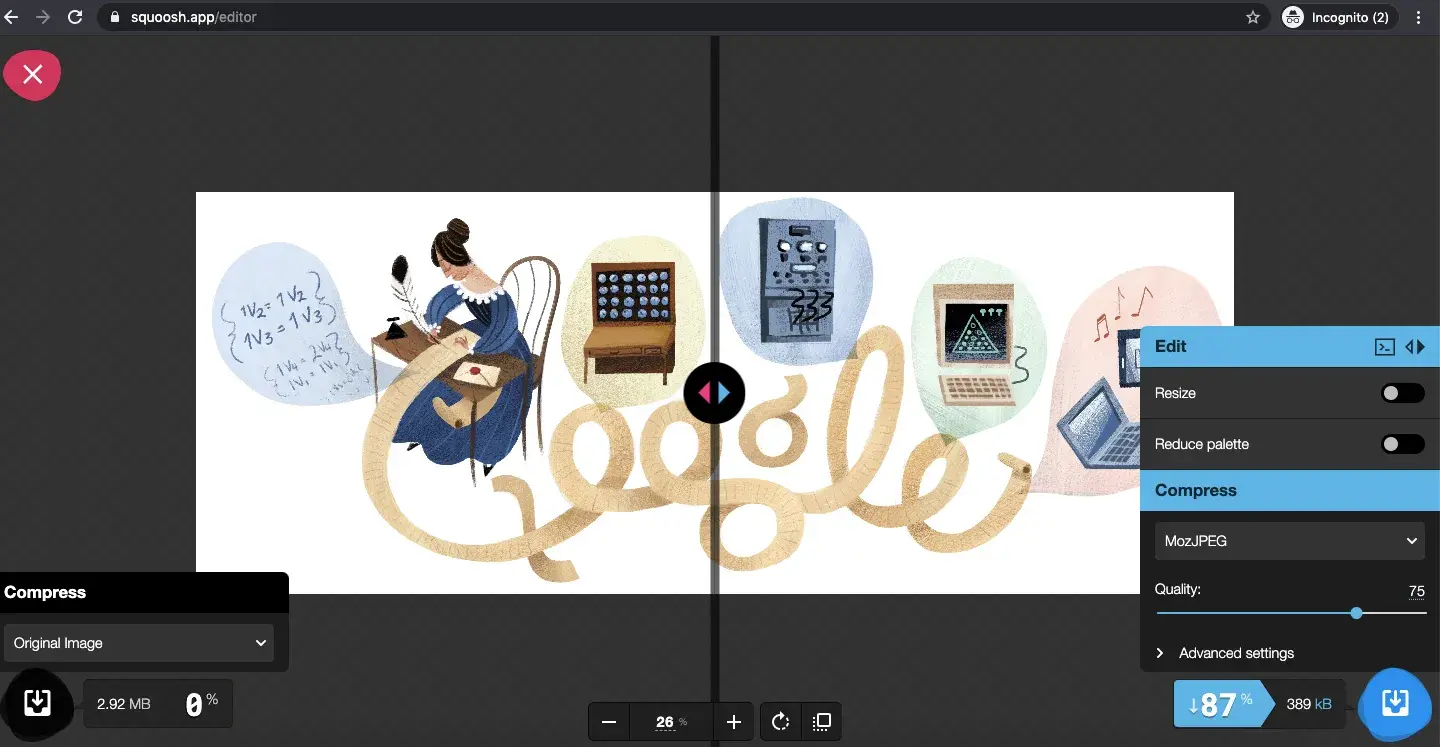

3. Compress your images.

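Optimizing your images is another way to lighten your website’s HTTP traffic. Compression doesn’t cut the number of requests, but it does cut the amount of data each image request has to carry.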

Ideally, you should reduce each image’s file size to less than 100 KB. However, reducing the file size could sometimes affect the image’s quality. In such cases, I recommend keeping it as close as possible to 100 KB without sacrificing quality.

You can upload, resize, and compress your images one at a time using a tool like Squoosh or all at once with a tool like TinyPNG.
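Before compressing anything, it helps to know which files are over budget. The sketch below is one possible audit script; it assumes “100 KB” means 100 × 1024 bytes and checks only a handful of common image extensions, both of which you may want to adjust.

```python
from pathlib import Path

IMAGE_SUFFIXES = {".png", ".jpg", ".jpeg", ".gif", ".webp"}  # assumed list, extend as needed
SIZE_BUDGET_BYTES = 100 * 1024  # assumed: treating "100 KB" as 100 * 1024 bytes


def oversized_images(folder, budget_bytes=SIZE_BUDGET_BYTES):
    """Return (path, size_in_bytes) for each image under folder that exceeds the budget."""
    results = []
    for path in sorted(Path(folder).rglob("*")):
        if path.is_file() and path.suffix.lower() in IMAGE_SUFFIXES:
            size = path.stat().st_size
            if size > budget_bytes:
                results.append((path, size))
    return results
```

Running `oversized_images("path/to/your/images")` returns the files worth feeding into a compression tool first.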

4. Reduce the number of render-blocking resources.

When loading a page, browsers download and parse each resource — including images, CSS, and more — before rendering them to the front-end user.

The browser deems specific resources, such as fonts and JavaScript files, critical, so it stops downloading and parsing other parts of the page until these resources are processed.

These resources are called “render-blocking resources” and can significantly slow down your site. Reducing the number of render-blocking resources on your site won’t technically reduce data latency, but it will improve the perception of load time on your site.
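A first step is finding out which resources on a page are likely to block rendering. The sketch below uses Python’s built-in HTML parser with a simplified heuristic of my own: it flags stylesheets, plus external scripts without `async`, `defer`, or `type="module"`, that appear inside `<head>`. Browsers apply more nuanced rules, so a tool like a performance audit remains the authority.

```python
from html.parser import HTMLParser


class RenderBlockingFinder(HTMLParser):
    """Collects URLs of resources in <head> that are likely render-blocking (heuristic)."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.blocking = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "head":
            self.in_head = True
        elif not self.in_head:
            return
        elif tag == "script" and attr.get("src"):
            # async, defer, and module scripts do not block the parser.
            deferred = "async" in attr or "defer" in attr or attr.get("type") == "module"
            if not deferred:
                self.blocking.append(attr["src"])
        elif tag == "link" and (attr.get("rel") or "").lower() == "stylesheet":
            self.blocking.append(attr.get("href", ""))

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def find_render_blocking(html):
    """Return the list of likely render-blocking resource URLs in an HTML string."""
    finder = RenderBlockingFinder()
    finder.feed(html)
    finder.close()
    return finder.blocking
```

For each script this reports, adding `defer` or `async` (where the script allows it) is often the simplest fix.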

Minimize Your Latency

Understanding and improving latency is crucial for enhancing user experience and operational efficiency.

By implementing strategies like using a CDN, minimizing render-blocking resources, and optimizing file sizes, businesses can significantly reduce latency and improve customer satisfaction.

To stay on top of latency issues, I recommend investing in a comprehensive website monitoring solution like the one offered in HubSpot’s CMS. A tool like this will give you visibility into how your site performs for users worldwide, allowing you to identify and address any regional hotspots or bottlenecks.

By combining strategic latency-reduction techniques with best-in-class monitoring capabilities, you’ll be able to deliver a fast, reliable website experience that keeps your customers happy and your business competitive.

Editor's note: This post was originally published in December 2020 and has been updated for comprehensiveness.
