Sending email that performs is a cornerstone of remarkable marketing. Unlike some of the other inbound marketing channels we utilize, email is finite. After sending an email, you immediately get an initial sense of performance that trails off over time. So how do we as marketers make sure we're making the most of every email sent?

A/B testing is a great way to change different elements of your email and see how they affect performance. For example, if your main Call-to-Action (CTA) is blue, but the color of CTAs you have been using on your website is green and has been working well, you could try a variation of your email that changes the button color. However, A/B testing goes far beyond button colors and simple text changes and ultimately, if used in the right way, can help you get more out of every email you send.

Let's walk through how you can use A/B testing in your next marketing email to improve performance.

Please note: Email A/B testing is only available to HubSpot Enterprise customers. For a limited time, you can try HubSpot Enterprise free for 30 days.

1. Establish a hypothesis

No, you don't need to conduct any weird science to A/B test emails, but you should have an idea of something that will contribute to better performance. The example above about changing the button color is a great place to start. In our fictional example, given that CTAs on the website currently have a good conversion rate, our hypothesis is that if we change the CTA button in our email to the same color used on the website, the email's conversion rate will go up.

If you need help coming up with a hypothesis, here are a few places you can start:

- Analytics - Look at where email recipients are not converting. Are they not clicking a link? Maybe you should try making it more prominent, changing the color, or adding a CTA.

- Email Best Practices - Ensure you have read best practices for email, and that you are applying them where you can. Test some of the elements that apply to your audience to measure the performance.

- Email Newsletters / Subscriptions - If you're anything like me, you probably receive 15+ emails per day just from subscribing to blogs and newsletters. Take a look at some of your favorite emails and make some educated guesses about why each one worked or did not work (especially if you interacted with it and clicked on a relevant CTA).

2. Create your A/B email test

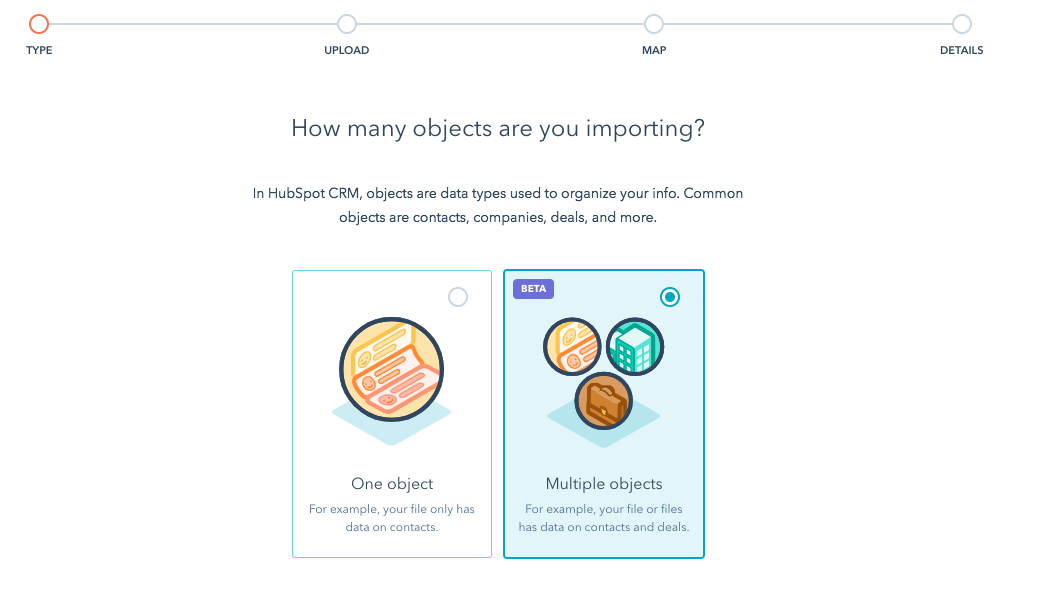

Let's walk through how to create an A/B test within HubSpot's Email App. Follow the steps below:

- Create a new email, or go into an email that you have created but have not sent yet.

- Click the A/B test button in the upper right of the email editor.

- Choose a name for your variation content.

- After doing so, the area where the "Create A/B Test" button was will change to an "A" and a "B". You can click on these to view and edit each version of your email and make the change you would like to test.

- Change an element of variation B of the email based on what you want to test. It's generally best practice when A/B testing to change only one element at a time, such as a button color or your headline text. You should not change both the headline text and the button color in the same email; test them separately instead. Doing this will allow you to isolate what is working and what's not.

- After making your changes, and before sending your beautiful emails, you should adjust options such as sample size. Generally, you should run an A/B test with an email list that contains more than 1,000 contacts, and you need to set the variation sizes large enough that there is a conclusive winner between the two versions of the email. For more details on how a winner is chosen, I recommend reading this in-depth Knowledge Base article.
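If you want a rough feel for what "conclusive" means, here is a minimal sketch of a two-proportion z-test in Python. This is a generic statistical check and an assumption on my part; it is not necessarily how HubSpot picks a winner (see the Knowledge Base article for that). The function name and the sample numbers are hypothetical.

```python
import math


def two_proportion_z_test(clicks_a, sent_a, clicks_b, sent_b):
    """Return (z, two-sided p-value) for the difference in click rates.

    Generic two-proportion z-test; assumes both sample sizes are positive.
    """
    rate_a = clicks_a / sent_a
    rate_b = clicks_b / sent_b
    # Pooled click rate under the assumption that A and B perform the same.
    pooled = (clicks_a + clicks_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    if std_err == 0:
        # No variation at all (e.g. zero clicks everywhere): nothing to compare.
        return 0.0, 1.0
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Illustrative numbers only: 500 recipients per variation.
z, p = two_proportion_z_test(clicks_a=40, sent_a=500, clicks_b=60, sent_b=500)
print(f"z = {z:.2f}, p = {p:.3f}")
```

A small p-value (a common convention is below 0.05) suggests the difference is unlikely to be noise. With small lists, even a large-looking gap can fail this check, which is one reason larger variation sizes help.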

3. Measure the results

After sending your email, you should look at how each variation performed. To measure the performance of each variation, click on the email. On the left side, there is a drop-down box for viewing the performance of each variation.

Ensure that you look at your key email metrics, such as clicks, opens, and any campaign tracking you also have. Based on this collection of metrics, you should be able to determine what helped make the winning variation perform better than its counterpart.
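If you export the raw counts, comparing variations side by side is simple arithmetic. Here is a small sketch in Python, assuming you have the delivered, open, and click counts for each variation. The class, its field names, and the numbers are hypothetical and not part of any HubSpot export format.

```python
from dataclasses import dataclass


@dataclass
class VariationStats:
    """Raw counts for one email variation (hypothetical field names)."""
    name: str
    delivered: int
    opens: int
    clicks: int

    @staticmethod
    def _ratio(numerator: int, denominator: int) -> float:
        # Avoid dividing by zero when a variation has no sends or opens.
        return numerator / denominator if denominator else 0.0

    @property
    def open_rate(self) -> float:
        return self._ratio(self.opens, self.delivered)

    @property
    def click_rate(self) -> float:
        return self._ratio(self.clicks, self.delivered)

    @property
    def click_to_open_rate(self) -> float:
        return self._ratio(self.clicks, self.opens)


# Illustrative numbers only, not real campaign data.
variation_a = VariationStats("A", delivered=500, opens=110, clicks=40)
variation_b = VariationStats("B", delivered=500, opens=115, clicks=60)

for v in (variation_a, variation_b):
    print(f"{v.name}: open {v.open_rate:.1%}, click {v.click_rate:.1%}, "
          f"click-to-open {v.click_to_open_rate:.1%}")
```

Looking at click-to-open rate alongside click rate helps separate "more people opened" from "more of the people who opened clicked", which matters when the element you changed sits inside the email body.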

So we're done, right? Not yet! In order to get the most out of your new A/B testing superpowers, you need to continue testing, learning, and iterating on emails.

4. Create a testing & iteration process

Having a few questions that you answer based on the performance of each email can help you learn a lot about email and your customers. To continuously improve your emails, keep testing and learning from the performance of each email that you send. Challenge some assumptions that you may have made in the past, and you may find a collection of small wins that result in a series of emails that perform really well.

Here are a few standard questions, and a brief process you can use after hitting send and looking at results:

- How much better did the variation perform than the original email?

- Why do I think it performed better?

- What could I do to improve future emails based on what I learned?

- What hypothesis should I test next?

Record the answers to these questions in a central location and consistently refer back to your learnings as you create new emails to reinforce the behaviors that work well with your audience. After doing this over a number of successive emails, you will find trends and better-performing emails. The most important part of A/B testing is to keep testing. Even if you see a massive increase from a single change, don't forget to keep going to improve future email performance.
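As one way to keep those answers in a central location, here is a minimal Python sketch that computes how much better the variation performed (relative lift) and stores the answers to the four questions as one JSON record. The record fields, the function name, and the example values are all hypothetical; a shared spreadsheet works just as well.

```python
import json
from dataclasses import dataclass, asdict


def relative_lift(original_rate: float, variation_rate: float) -> float:
    """How much better (or worse) the variation did, relative to the original."""
    if original_rate == 0:
        raise ValueError("original_rate must be greater than zero")
    return (variation_rate - original_rate) / original_rate


@dataclass
class ExperimentRecord:
    """One entry in a testing log, mirroring the four questions above."""
    email_name: str
    lift: float
    why_it_performed_better: str
    future_improvement: str
    next_hypothesis: str


# Illustrative values only, based on the fictional button color example.
record = ExperimentRecord(
    email_name="Example newsletter",
    lift=relative_lift(original_rate=0.08, variation_rate=0.12),
    why_it_performed_better="CTA color matched the website CTAs",
    future_improvement="Use the website CTA color in future emails",
    next_hypothesis="A shorter headline will increase clicks",
)

# One JSON object per line is easy to append to a shared log file.
log_line = json.dumps(asdict(record))
print(log_line)
```

Keeping the next hypothesis in the same record makes it easy to pick up where you left off when you build the next email.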

Have you used Email A/B testing? If so, what's your process for A/B testing emails and learning from them? How do you apply what you learned to future emails?