If you frequently conduct surveys, there's a good chance you're familiar with the term "nonresponse bias."

Nonresponse bias arises when the people who don't answer your survey, whether intentionally or not, differ from the people who do. It can be the reason your surveys aren't yielding useful results.

In this post, we'll discuss everything you need to know about nonresponse bias, including what it is, what causes it, and what you can do to avoid it when crafting surveys at your business.

What Is Nonresponse Bias?

Nonresponse bias occurs when participants are unwilling or unable to complete a survey, and the answers they would have given differ significantly from the answers of those who did fill out the survey.

Nonresponse bias can impact a survey question or the entire survey. Reasons for nonresponse vary from person to person.

To be considered a form of bias, a source of error must be systematic in nature. Nonresponse bias is not an exception to this rule. Suppose your survey method makes it more likely for specific groups of potential respondents to refuse to participate or to be absent during a survey. In that case, you've knowingly or unknowingly created a bias.

How could this happen?

Let's say a survey question asks if people have cheated on a standardized test. People who have cheated may feel awkward at the thought of admitting this, and therefore, refuse to respond to this survey. The result is nonresponse bias impacting your survey results.
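To make the cheating example concrete, here is a minimal sketch of the arithmetic. Every number in it is invented for illustration (a pool of 1,000 invitees, 200 of whom cheated, with cheaters responding far less often), and `Group` and `true_and_observed_share` are hypothetical names rather than part of any survey tool.

```python
from dataclasses import dataclass


@dataclass
class Group:
    size: int             # people invited in this group
    response_rate: float  # share of the group that completes the survey
    cheated: bool         # the true answer for everyone in the group


def true_and_observed_share(groups):
    """Return (true share of cheaters, share of cheaters among respondents)."""
    total = sum(g.size for g in groups)
    true_share = sum(g.size for g in groups if g.cheated) / total

    # Expected number of respondents, overall and among cheaters only.
    respondents = sum(g.size * g.response_rate for g in groups)
    cheating_respondents = sum(
        g.size * g.response_rate for g in groups if g.cheated
    )
    return true_share, cheating_respondents / respondents


# Assumed figures: cheaters respond 10% of the time, everyone else 50%.
groups = [
    Group(size=200, response_rate=0.10, cheated=True),
    Group(size=800, response_rate=0.50, cheated=False),
]

true_share, observed_share = true_and_observed_share(groups)
print(f"true: {true_share:.1%}, observed: {observed_share:.1%}")
# prints "true: 20.0%, observed: 4.8%"
```

Under these assumptions, the survey would report that fewer than 5% of people cheated when the real figure is 20%. The gap comes entirely from who chose to respond, not from anyone answering dishonestly.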

Nonresponse bias can stem from people who forget, don't understand, or simply don't want to take your survey, which makes it a common problem for researchers.

Response Bias vs. Nonresponse Bias

You might be wondering if this is the same thing as when participants purposefully provide researchers with misleading information. I'm here to tell you that it's not. That would actually be called response bias, which focuses on the participants who respond to survey questions inaccurately or untruthfully.

For example, if a participant felt pressured by a survey maker to say their experience with a brand was great, that could create response bias. However, if the participant felt intimidated by the question and decided not to answer, that would be considered nonresponse bias.

Common Causes of Nonresponse Bias

Nonresponse bias occurs due to factors your business should be aware of. Let's now review some of these factors and examples of nonresponse bias.

Poor Survey Construction

If your survey is too long or difficult to understand, participants will quickly abandon it. Research suggests that survey completion rates drop by 5% to 20% when a survey takes more than seven or eight minutes to complete.

Pro tip: Ensure your surveys are brief, user-friendly, and engaging if you want to keep your response rates high.

Incorrect Target Audience

Sometimes, you're not targeting the right audience with your survey, which gives way to nonresponse bias. The people you reach might not be interested in the topic or might not know enough to answer the survey questions. That's why it's essential to leverage your customer data when distributing surveys and to target the groups most likely to participate.

Pro tip: You can try changing your survey's trigger if your audience doesn't seem engaged.

Refusal to Participate

Some customers dislike participating in a survey. We've all fallen into this category at one point or another.

In these cases, timing is critical. If a person is adamant about not participating in your survey, then it may not be the right time to target them and ask them to do so. It doesn't mean that they'll never fill out one of your surveys. Rather, you should find the right time and channel to connect with these individuals to gather their feedback.

Pro tip: Adjust your survey timing and use the correct channel. You can also offer an incentive to motivate your customers to participate.

Failed Survey Delivery

Sometimes, the survey simply doesn't make it to the recipient. If you're sending a survey via email, for example, it could get lost in a spam folder. This still gets recorded as a nonresponse. This has happened to me before, and it can get frustrating.

Pro tip: The best surveys have safeguards that account for failed deliveries. For instance, you can add a tracking pixel to your emails to check whether your messages are being opened. Or, if you're managing a mail-in survey, you can include a return address so that undeliverable surveys come back to you.

A simple system like this will help you keep track of surveys that were never completed because the intended participant never saw them.
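As a rough sketch of that bookkeeping, the snippet below tallies survey outcomes and reports the response rate two ways: against everyone you sent to, and against only the people the survey actually reached. The status labels (`"completed"`, `"ignored"`, `"bounced"`) and the `summarize` function are hypothetical. Your email or survey tool will have its own export format.

```python
from collections import Counter


def summarize(outcomes):
    """Summarize a list of per-recipient outcome labels (assumed labels)."""
    counts = Counter(outcomes)
    sent = len(outcomes)
    delivered = sent - counts["bounced"]  # bounced surveys were never seen
    completed = counts["completed"]
    return {
        "sent": sent,
        "delivered": delivered,
        "completed": completed,
        # Guard against division by zero when a list is empty or all bounced.
        "rate_of_sent": completed / sent if sent else 0.0,
        "rate_of_delivered": completed / delivered if delivered else 0.0,
    }


# Made-up outcomes for eight recipients.
outcomes = [
    "completed", "bounced", "ignored", "completed",
    "bounced", "ignored", "ignored", "completed",
]

summary = summarize(outcomes)
print(summary["rate_of_sent"], summary["rate_of_delivered"])
# prints "0.375 0.5"
```

Separating the two rates shows how much of your nonresponse comes from delivery problems and how much comes from people who saw the survey and chose not to finish it.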

Accidental Omission

Another common cause of nonresponse bias is forgetting to complete the survey altogether. Don't take it personally — it happens!

While it may seem that your intended participant was bored by your survey and skipped it, this isn't always the case. Instead, the participant may have been distracted and pulled away from the form before they could complete it. While it's hard to prevent these incidents, ideally, they should only make up a small fraction of your nonresponses. Plus, keeping your survey brief can reduce the likelihood that this occurs, as participants will be able to wrap up the survey quickly before their attention shifts elsewhere.

Pro tip: Send reminders that nudge your audience to complete the survey even though they might have initially forgotten about it.

Examples of Nonresponse Bias

Let's see this concept in action with common examples of nonresponse bias.

1. Outdated Customer Information

Let's say you want to send a survey to a group of customers who attended one of your events two years ago. While you recorded all of their email addresses in your CRM at the time, you haven't gathered any new information on them since that event.

When you send your survey, you notice that your delivery and open rates are extremely low. Turns out, your customers have changed their email addresses and aren't checking the inboxes that you have listed under their names.

This would be considered nonresponse bias. While these customers were technically sent the survey, they never had a real chance to interact with it.

2. Requesting Sensitive Information

In this example, you're running a cybersecurity company working on a new way to encrypt email addresses. To understand your customers' current security setup, you distribute a survey asking them to provide passwords to different accounts and their reasoning for choosing those words or phrases.

To your surprise, no one completes your survey. In fact, a few customers even call your support team to report your survey as potential fraud. (Whoops!)

3. Forgotten Survey

For this example, let's say you sent a mail-in survey to your customers about a month ago. Your survey's instructions are to submit the form by the end of the month so participants can receive a special promo offer.

Just as the end of the month approaches, a global crisis strikes, and your company quickly pivots its marketing, sales, and customer service efforts to adapt to the new environment. You decide to put your survey on hold because customers are more focused on other needs than providing feedback.

This would also be considered nonresponse bias because participants never got a real chance to submit the survey. If they waited until the end of the month, they wouldn't have had the same opportunity to collect the promo offer as those who submitted the survey earlier.

4. Using the Wrong Channel

Say your company wants to figure out what older folks in the community think about the recent changes to the healthcare system. You decide to send out the survey using social media and your website.

After collecting data at the end of the survey, you find that only a handful of people filled out your survey forms. Why? Your target audience may not be as familiar with navigating the social platform you chose or your highly technical website.

Given the low number of responses, conclusions drawn from such data are most likely inaccurate.

5. Focusing on the Wrong Audience

Nonresponse bias can occur when you send your survey to the wrong people or audience.

For example, let's say you want to open a vegan restaurant in the middle of town. So, you send a survey to your database of email addresses belonging to residents of that town, asking about menu opinions.

Everyone who answered the survey loved the idea of a vegan restaurant and sent in what they'd love to see on the menu.

However, you noticed that a significant number of the surveys were left unanswered. It turns out that most of the town's residents prefer to eat meat and, therefore, have no opinion on a vegan menu.

Now that you're familiar with how nonresponse bias can affect your surveys, let's review what you can do to avoid it.

How to Reduce Nonresponse Bias

- Reconsider your survey's trigger and timing.

- Optimize your survey's design.

- Review your survey questions.

- Leverage customer data.

- Provide options for omission.

- Reward customers with incentives.

- Keep the survey length short.

- Make sure the information is confidential.

- Create a path with the least resistance.

- Prime your audience.

- Take your time.

1. Reconsider your survey's trigger and timing.

You want to present your survey at a moment when participants are most likely to respond. Furthermore, the survey should only take a few minutes to complete. Approaching people at the right time and in the right way will make it easier for you to encourage them to participate.

Your customer journey map is an excellent resource you can use to pinpoint the right moment to dispatch your survey. You can look for moments of delight where customers want to share an experience or for pain points where people want to voice their criticisms.

Targeting these memorable interactions is the key to approaching participants at the optimal point in their customer journey.

2. Optimize your survey's design.

Survey length can be one of the most significant factors that determine whether participants fill out your survey. An ideal survey has no more than 20 questions and takes 14 minutes or less to complete.

Anything longer than that will lead to lower response and completion rates.

Another important factor to consider is your survey's design. If you stack questions on top of each other, viewers may be intimidated by all the scrolling they'll have to do just to reach the end of the page. User experience makes a difference when it comes to reducing nonresponse bias.

Here's a tip: If you can access customer feedback software, you can trigger questions one at a time so customers can focus on each one individually and won't be overwhelmed by a 30-question list.

3. Review your survey questions.

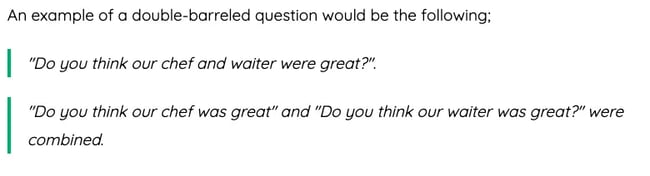

The type of questions you're asking will also influence participation. Long-response questions and comment boxes get exhausting over time. Double-barreled questions, which ask about two things at once (like the example shown in this section), can confuse participants and lead to abandonment.

Your survey should primarily include close-ended questions like multiple choice or Likert scales. These give customers a fixed number of responses, making the survey much easier to complete.

4. Leverage customer data.

Targeting the right participants for your survey can also play a major role in its success. If you have a customer relationship management (CRM) tool, you can leverage customer data and send your survey to people who would be more willing to complete it.

Buyer personas would be very helpful in this situation. You can use them to identify target audiences more likely to participate in a survey. (Psst: If you need help creating your buyer personas, HubSpot offers a free tool to do so.)

You can also look at past interactions with individual accounts to see if anyone recently interacted with your brand and may want to provide feedback.

Not only can this help you attain valuable insights from your customers, but it can also help you connect with accounts potentially at risk of churn.

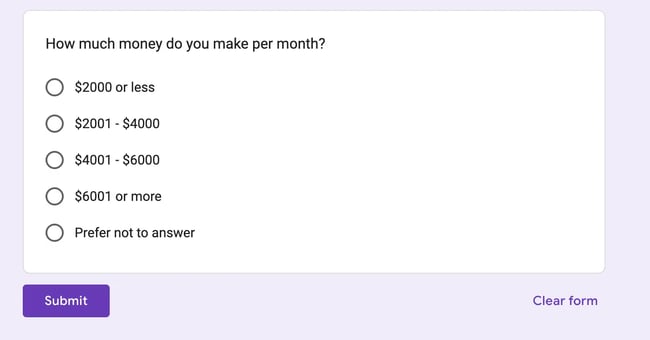

5. Provide options for omission.

While your survey should primarily offer closed-ended questions, it's also important to include options to omit individual answers. You can either not require all questions to be answered or provide a dedicated multiple-choice option for participants to omit each question.

Here's an example.

When asking for feedback, your customers shouldn't feel pressured or forced to provide information. Most would rather abandon your survey altogether than answer a question that makes them uncomfortable.

Including this omission option provides a safety net for anyone who wants to skip a specific section. After all, it's not worth losing all of their answers over a single survey question.
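Here is one way the omission option might look in practice. This is a sketch, not the format of any particular survey tool: the question layout, the `SKIP` label, and the `item_nonresponse_rate` helper are all assumptions made for illustration.

```python
SKIP = "Prefer not to answer"  # assumed label for the opt-out choice

# A hypothetical question definition with an explicit opt-out choice.
question = {
    "text": "How satisfied are you with our pricing?",
    "options": ["Satisfied", "Neutral", "Dissatisfied", SKIP],
    "required": False,  # participants may also leave it blank
}


def item_nonresponse_rate(answers):
    """Share of participants who skipped this one question.

    A blank answer (None) and the explicit opt-out both count as skipped.
    """
    if not answers:
        return 0.0
    skipped = sum(1 for a in answers if a is None or a == SKIP)
    return skipped / len(answers)


# Made-up answers from four participants who finished the survey.
answers = ["Satisfied", SKIP, None, "Neutral"]
print(item_nonresponse_rate(answers))
# prints "0.5"
```

Tracking skips per question lets you keep the rest of each participant's answers while flagging the questions people are reluctant to answer.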

6. Reward customers with incentives.

Offer incentives for completed surveys to boost engagement. This could be a discount or a small prize obtained once a participant fills out your form.

If you need help with what to offer, an excellent place to start is with your customer loyalty program. You can borrow some of its rewards or promotions and apply them to your survey.

If your loyalty program is point-based, you can offer participants loyalty points that can be added to their accounts.

This is a great way to acquire new signups for your loyalty program and increase overall customer retention.

7. Keep the survey length short.

Your survey's length is likely the deciding factor for most of your participants. Time is precious, and most people don't want to spend their time answering survey questions. It is up to you to get them to participate in your survey by keeping it as concise as possible.

If you keep your survey relatively short, your audience will be able to complete it quickly. You can always dispatch a follow-up survey at another time.

8. Make sure the information is confidential.

Some participants might refuse to answer a question because they fear their responses will be made public. You can allay their fears by letting them know they will be anonymous and their information will be strictly confidential.

9. Create a path with the least resistance.

Sending survey questions to your audience is already an interruption to their day. So, to reduce the chances of them leaving your survey unanswered, you must remove any resistance that stands in their way.

For instance, if the survey is to be answered on a website, ensure the website doesn’t drag and is easy to navigate. The same principle applies to any other medium you use for your surveys — eliminate as many hurdles as possible.

10. Prime your audience.

Just as studios use trailers to whet an audience’s appetite for an upcoming movie, you can create anticipation about your next survey.

Granted, a survey doesn’t sound as exciting as the latest blockbuster, but when an audience knows what is expected in a survey (like how long it’ll take and what it’s about), they’ll be more prepared to answer.

A simple email might be all you need to set expectations and prime your audience.

11. Take your time.

People are busy! They might not have the time to complete your survey on the day or week you send it out.

Instead of working with just the data and responses you can gather within a short time, be flexible. This means allowing ample time for your audience to fill out the surveys — that way, you’ll have more responses.

Nonresponse Bias and Your Surveys

Nonresponse bias is very common and can be detrimental to survey results. People increasingly refuse to participate in surveys, leading researchers to use "convenience samples." Convenience sampling is a method where survey researchers collect data from willing and available participants.

When that pool of available participants isn't the right fit for the survey, it can result in misleading or incorrect discoveries. Once you've handled nonresponse bias, you can continue to master different types of surveys and questionnaires. Happy surveying!

Editor's Note: This post was originally published in October 2022 and has been updated for comprehensiveness.
