You may have heard of a load balancer and an API gateway, but do you know what sets them apart? Both are essential components of web infrastructure architecture, yet their differences can be confusing for those who don't work in technology.

Think about it this way: the load balancer is like a ticket booth operator at a major theme park, and the API gateway is like a turnstile located near the entrance. They both manage traffic coming into your website or application; however, they have different roles in managing this traffic.

In this blog post, we'll explain how each one works so that you can better understand which option best suits your business needs.

API Gateway Overview

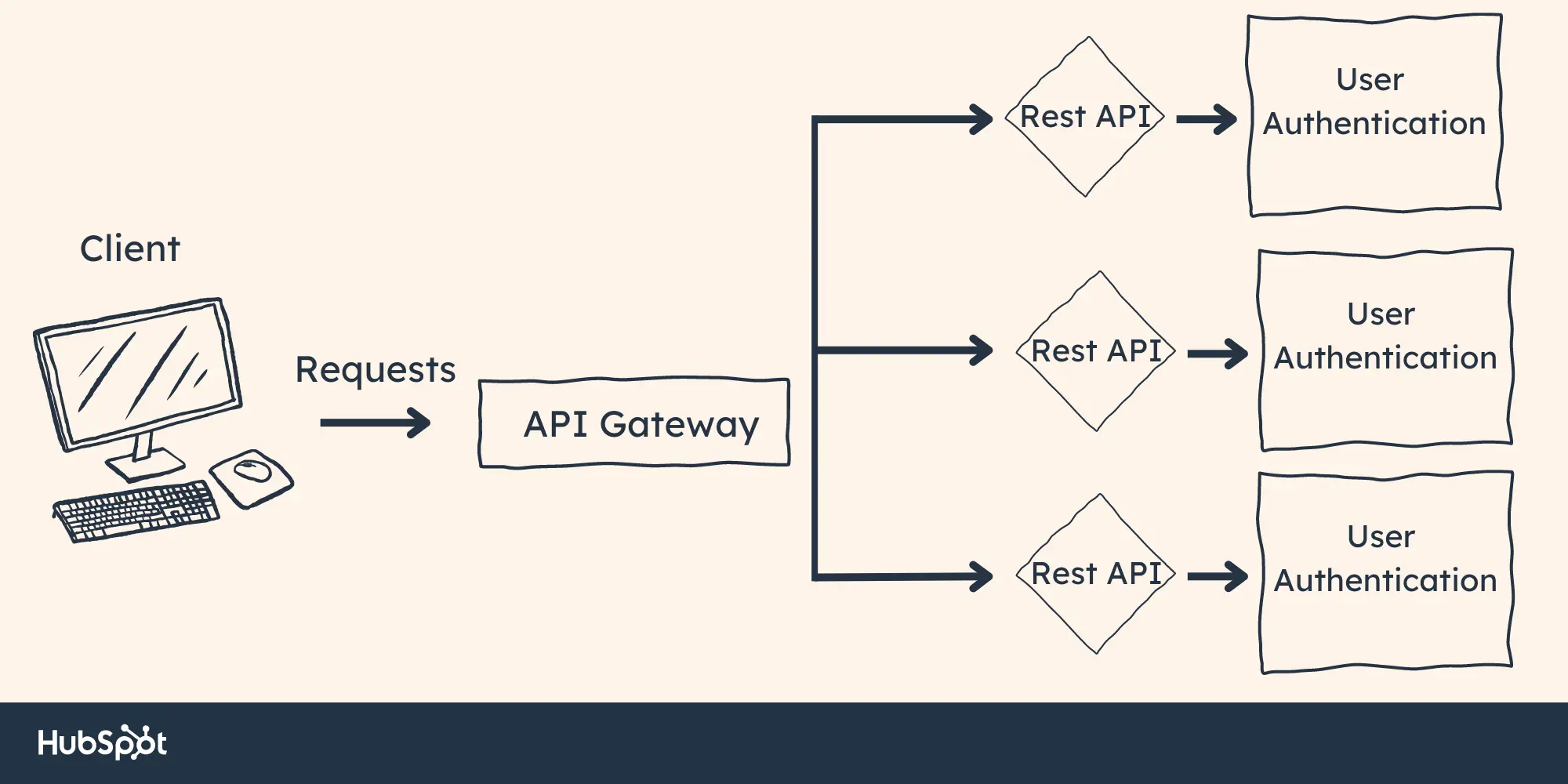

An API gateway is an intermediary between the client and the server. It is a single entry point for multiple services within your web or mobile application. You can think of it as a gatekeeper that manages access to your backend services, such as databases, microservices, functions, etc.

The main purpose of an API gateway is to provide a secure and consistent access layer for client applications. It can also perform various tasks, like rate-limiting requests, authenticating users, logging requests/responses, enforcing security policies, etc.

API Gateway Functions

The API gateway can provide many functions. Here are a few of the main ones:

- Authentication - Verifies the identity of the user or client making each incoming request.

- Authorization - Controls what data or services the user is allowed to access.

- Rate Limiting - Limits the number of requests that can be made within a certain time frame.

- Logging - Logs requests and responses to help with troubleshooting, debugging, and auditing.

These functions help ensure that only authorized users can access the resources they need and prevent malicious actors from overloading your system.
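To make these functions concrete, here is a minimal Python sketch of a gateway's request pipeline. It is an illustration, not how any particular gateway product works. The API keys, the per-key route permissions, the `handle` function, and the limit of a three-request burst refilled at one request per second are all hypothetical. Rate limiting uses a token bucket, which is one common algorithm among several.

```python
import logging
import time
from dataclasses import dataclass, field
from typing import Optional

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("gateway")

# Hypothetical API keys, each mapped to the routes that client may call.
API_KEYS = {
    "key-alice": {"/orders", "/catalog"},
    "key-bob": {"/catalog"},
}


@dataclass
class TokenBucket:
    """Allows bursts of up to `capacity` requests, refilled at `rate` tokens per second."""

    capacity: float
    rate: float
    tokens: float = field(init=False)
    last: float = field(init=False)

    def __post_init__(self) -> None:
        self.tokens = self.capacity
        self.last = time.monotonic()

    def allow(self) -> bool:
        now = time.monotonic()
        # Refill based on elapsed time, never exceeding the bucket's capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# One bucket per API key, created on first use.
buckets: dict[str, TokenBucket] = {}


def handle(api_key: Optional[str], path: str) -> tuple[int, str]:
    """Pass a request through authentication, authorization, rate limiting, and logging."""
    # Authentication: is this a known client?
    if api_key not in API_KEYS:
        log.info("401 %s (unknown key)", path)
        return 401, "Unauthorized"
    # Authorization: may this client call this route?
    if path not in API_KEYS[api_key]:
        log.info("403 %s for %s", path, api_key)
        return 403, "Forbidden"
    # Rate limiting: hypothetical burst of 3 requests, refilled at 1 request per second.
    bucket = buckets.get(api_key)
    if bucket is None:
        bucket = buckets[api_key] = TokenBucket(capacity=3, rate=1.0)
    if not bucket.allow():
        log.info("429 %s for %s", path, api_key)
        return 429, "Too Many Requests"
    # Logging: record the request before forwarding it to the backend service.
    log.info("200 %s for %s", path, api_key)
    return 200, f"forwarded {path}"
```

With these settings, a fourth rapid request from the same key is rejected with 429 until its bucket refills, and every decision is logged for later troubleshooting.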

API Gateway Use Cases

API gateways are typically used in web/mobile applications where multiple services or APIs need to be integrated. A gateway simplifies securely accessing these services by exposing them through one easily accessible interface.

For example, suppose you have an e-commerce website with multiple microservices that each handle a different task (such as product catalog, orders, payments, and shipping). An API gateway could provide a single entry point for all of these services.
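A gateway's routing for this e-commerce example could look roughly like the sketch below, which maps public path prefixes to internal services. The service hostnames and ports are hypothetical, and a real gateway would also forward the request and its response rather than only computing the upstream URL.

```python
from typing import Optional

# Hypothetical internal addresses for each e-commerce microservice.
ROUTES = {
    "/catalog": "http://catalog-service:8080",
    "/orders": "http://orders-service:8080",
    "/payments": "http://payments-service:8080",
    "/shipping": "http://shipping-service:8080",
}


def resolve(path: str) -> Optional[str]:
    """Map a public request path to the upstream URL of the service that owns it."""
    # Check longer prefixes first so more specific routes win if any overlap.
    for prefix in sorted(ROUTES, key=len, reverse=True):
        if path == prefix or path.startswith(prefix + "/"):
            return ROUTES[prefix] + path
    # No service owns this path; a gateway would typically answer 404.
    return None
```

For instance, `resolve("/orders/42")` returns `http://orders-service:8080/orders/42`, while a path no service owns, such as `/cart`, returns `None`. Clients only ever see the single public entry point.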

API Gateway Advantages

Leveraging an API gateway when designing a system with microservices offers various advantages. See a few below.

You can replace custom code.

Without a gateway, tasks such as authentication, authorization, routing, service discovery, and caching often require custom code in each service. An API gateway can handle these tasks in one place for your whole system.

You can protect clients from failures.

The gateway serves as a single entry point for client requests, which helps limit the impact of errors and failures. If a microservice fails, the gateway can retry the request, reroute it to a healthy instance, or return a fallback response.
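The sketch below illustrates rerouting on failure under simple assumptions: each upstream instance is modeled as a plain Python function, and the hypothetical `ServiceUnavailable` exception stands in for a network error or timeout. Production gateways typically layer timeouts, retry limits, and circuit breakers on top of this basic idea.

```python
from typing import Callable


class ServiceUnavailable(Exception):
    """Hypothetical error raised when an upstream instance cannot serve a request."""


def call_with_fallback(
    upstreams: list[Callable[[str], str]], path: str, fallback: str
) -> tuple[int, str]:
    """Try each upstream instance in order; if every one fails, return a fallback."""
    for upstream in upstreams:
        try:
            return 200, upstream(path)
        except ServiceUnavailable:
            # Reroute to the next instance instead of failing the client request.
            continue
    # All instances failed: degrade gracefully instead of exposing a raw error.
    return 503, fallback


# Two stand-in upstream instances for demonstration.
def failing_instance(path: str) -> str:
    raise ServiceUnavailable(path)


def healthy_instance(path: str) -> str:
    return f"order data for {path}"
```

Calling `call_with_fallback([failing_instance, healthy_instance], "/orders", "Please try again later")` returns `(200, "order data for /orders")`, because the gateway moves past the failed instance; if no instance succeeds, the client gets a 503 with the fallback message.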

You can scale efficiently.

As your business grows, you will likely have to adjust your system. API gateways add a layer of abstraction that shields external clients from changes to individual microservices, so you can restructure and scale your services over time without breaking the clients that depend on them.

API Gateway Disadvantages

Although API gateways offer many benefits, there are certain disadvantages you need to consider. Let's explore a few below.

A failure of the gateway can affect everything else.

API gateways can become a single point of failure in a microservices architecture. If the gateway runs into an error, all traffic to the microservices is affected, which can degrade the user experience.

To avoid this risk, design the API gateway for high availability and fault tolerance: run redundant gateway instances and add failover mechanisms. This keeps your system resilient if one instance runs into problems.

Extra routing increases processing times.

Another disadvantage involves API gateway routing. Because every request and response passes through the gateway, it adds an extra hop that can increase the system’s processing time. High-throughput operations and those that depend on real-time responsiveness can suffer from even minor delays.

To combat this issue, optimize the API gateway's configuration and infrastructure, then monitor its performance closely for bottlenecks.

Load Balancer Overview

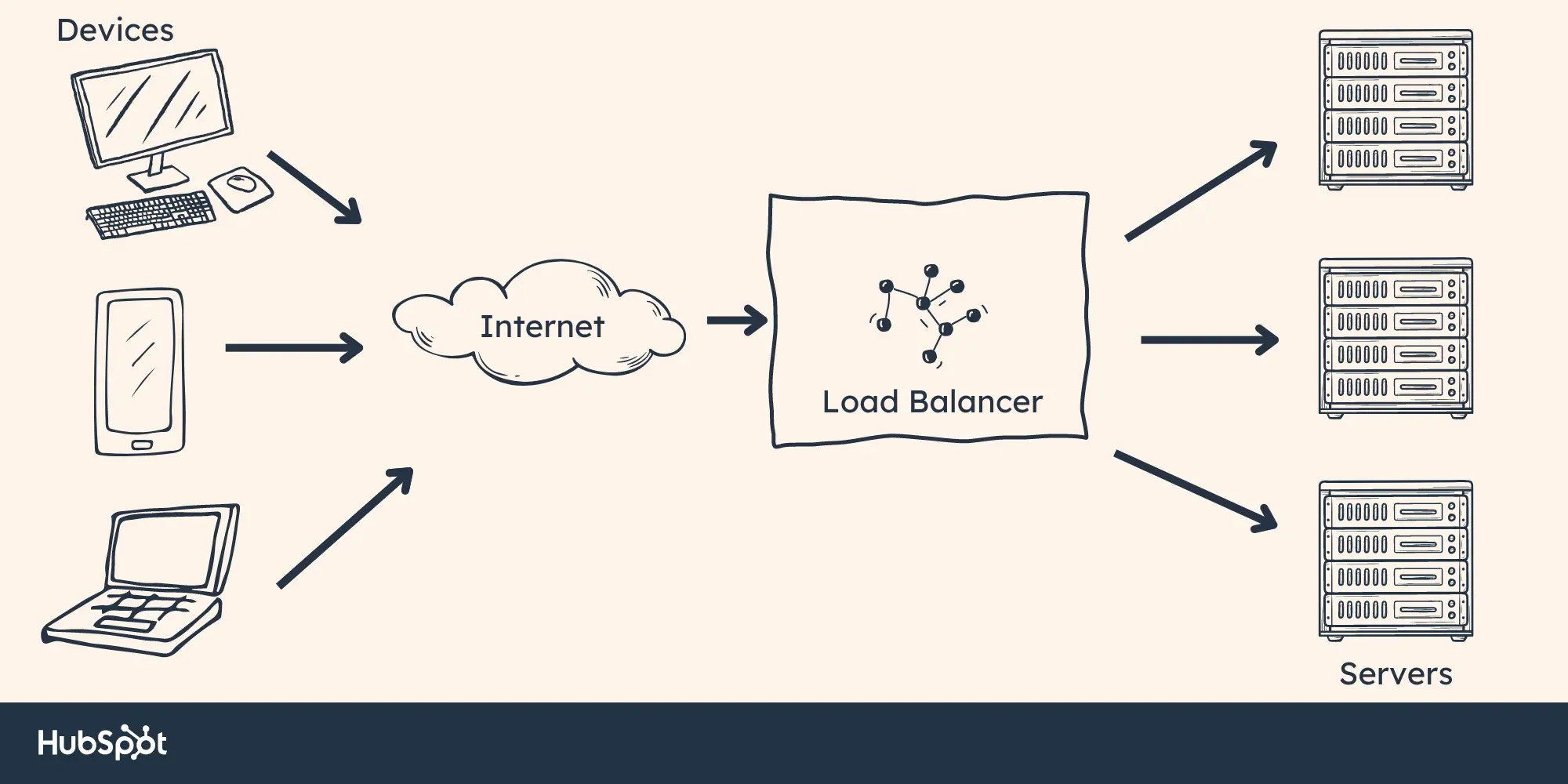

A load balancer is a service that distributes incoming traffic across multiple servers or resources. In most cases, it sits in front of two or more web servers and distributes network traffic between them. This helps ensure the optimal utilization of server resources and efficient content delivery to the user’s device.

In essence, a load balancer sends each request to a server that can handle it, relieving strain on any single server by spreading requests across the pool.
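One common distribution strategy is round robin, where each new request goes to the next server in a fixed rotation. The minimal sketch below assumes hypothetical server names and a hypothetical `RoundRobinBalancer` class; real load balancers also offer other strategies, such as least connections or weighted distribution.

```python
class RoundRobinBalancer:
    """Hands out backend servers in a fixed rotation."""

    def __init__(self, servers: list[str]) -> None:
        self.servers = list(servers)
        self._next = 0  # Count of requests assigned so far.

    def add_server(self, server: str) -> None:
        # Scaling out: a new server joins the rotation on the next pick.
        self.servers.append(server)

    def pick(self) -> str:
        # Cycle through the pool so each server gets an equal share of requests.
        server = self.servers[self._next % len(self.servers)]
        self._next += 1
        return server


lb = RoundRobinBalancer(["web-1", "web-2", "web-3"])
```

With three servers, six calls to `lb.pick()` return `web-1`, `web-2`, `web-3`, `web-1`, `web-2`, `web-3`. Round robin is simple and predictable, but it assumes every server and request costs roughly the same, which is why other strategies exist.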

Load Balancer Functions

The primary role of a load balancer is to balance the load between two or more servers. It does this by monitoring the health of its backend servers and distributing traffic accordingly.
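Here is a rough sketch of how health checks can feed into server selection. It assumes a hypothetical `is_healthy` probe (in practice often an HTTP request to a health endpoint) and skips any server that fails it. For simplicity it checks health on every pick; real load balancers usually run checks periodically in the background and reuse the results.

```python
from typing import Callable


class HealthAwareBalancer:
    """Round robin over the pool, skipping servers that fail a health check."""

    def __init__(self, servers: list[str], is_healthy: Callable[[str], bool]) -> None:
        self.servers = list(servers)
        self.is_healthy = is_healthy  # Hypothetical probe supplied by the caller.
        self._next = 0

    def pick(self) -> str:
        # Try each server at most once per call, starting where the last call left off.
        for _ in range(len(self.servers)):
            server = self.servers[self._next % len(self.servers)]
            self._next += 1
            if self.is_healthy(server):
                return server
        # Every server failed its check, so there is nowhere to send the request.
        raise RuntimeError("no healthy backend servers")


down = {"web-2"}  # Simulated outage for demonstration.
balancer = HealthAwareBalancer(["web-1", "web-2", "web-3"], lambda s: s not in down)
```

While `web-2` is marked down, `balancer.pick()` alternates between `web-1` and `web-3`, so clients never reach the failed server.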

Many load balancers also provide other important functions, such as:

- SSL Offloading – Handles the encryption and decryption of HTTPS traffic so backend servers don't have to.

- HTTP Compression – Compresses web pages to reduce the amount of data sent over the network.

- Content Caching – Stores frequently used content in a cache, so it can be quickly retrieved when needed.
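The compression and caching features above can be sketched with Python's standard library: `gzip` for compression and a small time-based cache for content caching. The function and class names, the simplified `Accept-Encoding` check, and the TTL values are illustrative assumptions, not how any specific load balancer implements these features.

```python
import gzip
import time
from typing import Optional


def compress_if_accepted(body: bytes, accept_encoding: str) -> tuple[bytes, dict[str, str]]:
    """Gzip a response body when the client's Accept-Encoding header allows it."""
    # Simplified check: ignores quality values such as "gzip;q=0".
    if "gzip" in accept_encoding.lower():
        return gzip.compress(body), {"Content-Encoding": "gzip"}
    return body, {}


class TTLCache:
    """Stores responses for `ttl` seconds so repeat requests can skip the backend."""

    def __init__(self, ttl: float) -> None:
        self.ttl = ttl
        self._store: dict[str, tuple[float, bytes]] = {}

    def get(self, key: str) -> Optional[bytes]:
        entry = self._store.get(key)
        if entry is None:
            return None
        stored_at, value = entry
        if time.monotonic() - stored_at > self.ttl:
            # The entry has expired; drop it so the next request refreshes it.
            del self._store[key]
            return None
        return value

    def put(self, key: str, value: bytes) -> None:
        self._store[key] = (time.monotonic(), value)
```

For example, `TTLCache(ttl=60)` would serve a stored page for up to a minute before a request has to reach a backend server again, and repetitive HTML typically shrinks considerably under gzip.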

Load Balancer Use Cases

Load balancers are commonly used in applications that require high availability, scalability and/or redundancy. Examples of use cases include:

- Distributing load across multiple web servers to improve performance

- Providing high availability for critical applications such as e-commerce websites

- Allowing for server redundancy in the event of downtime

Load Balancer Advantages

Load balancers are great for systems with high-volume traffic. Here are four of the main advantages they offer.

Load balancers improve speed.

By distributing network traffic across all your servers, load balancers keep any single server from becoming overloaded, which improves performance and speed.

Systems can keep running.

Load balancers ensure that systems remain available. They can detect when servers are down and intelligently reroute requests accordingly.

You can scale with ease.

Load balancers allow businesses to easily scale their systems as demand grows. You can add servers and update the load balancer's configuration so it routes traffic to them.

Load balancers are great for security.

Security is important when it comes to protecting your data from malicious actors, and load balancers add several layers of protection.

Many load balancers can block unwanted traffic, and spreading requests across servers helps absorb the impact of DDoS attacks. Load balancers that handle SSL offloading also keep traffic encrypted between clients and the load balancer.

Load Balancer Disadvantages

Load balancers offer many benefits. However, it’s important to consider the potential drawbacks as well. Let’s explore a few.

System-Wide Crashes

Load balancers increase availability by sharing the workload between various servers. However, the load balancer itself can become a single point of failure.

If the load balancer fails, it could cause a system-wide crash, even if all the servers behind it are still functioning properly.

Potential Delays

Depending on its setup, a load balancer may add latency, because every request passes through it before reaching a server. Although this delay is usually minor, it could become noticeable for certain users and degrade the performance of latency-sensitive services.

Price and Complexity

Setting up a load balancer may involve complicated procedures, additional hardware, and specialized technical expertise, which can make it an expensive and complex solution.

Now that we’ve covered how API gateways and load balancers work, along with their use cases, advantages, and disadvantages, let’s compare them.

Comparison: API Gateway vs. Load Balancer

So, how do API gateways and load balancers differ? The main difference is that API gateways provide secure access to backend services, whereas load balancers distribute traffic between multiple servers. In short, a load balancer spreads incoming requests across servers, while an API gateway authenticates requests and provides access to data sources or other applications.

Knowledge Tip:

When choosing between an API gateway and a load balancer, consider your application's specific security and performance needs. If you're dealing with multiple servers or services, it's worth using both: an API gateway to authenticate and route requests, and load balancers to spread each service's traffic across its servers.
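As a rough illustration of using both, the sketch below puts a gateway layer (authentication and path routing) in front of per-service server pools that are load balanced with round robin. The API key, service prefixes, and instance names are hypothetical, and in practice the two layers are usually separate components rather than one function.

```python
from itertools import cycle

# Hypothetical layout: each service runs on its own pool of instances.
POOLS = {
    "/catalog": cycle(["catalog-1", "catalog-2"]),
    "/orders": cycle(["orders-1", "orders-2", "orders-3"]),
}
VALID_KEYS = {"key-alice"}  # Hypothetical API keys.


def gateway(api_key: str, path: str) -> tuple[int, str]:
    """Authenticate, route to the owning service, then pick an instance from its pool."""
    # API gateway layer: reject unknown clients before any backend work happens.
    if api_key not in VALID_KEYS:
        return 401, "Unauthorized"
    for prefix, pool in POOLS.items():
        if path == prefix or path.startswith(prefix + "/"):
            # Load balancer layer: round robin across that service's instances.
            return 200, next(pool)
    return 404, "Not Found"
```

Successive authenticated calls to `/orders/...` land on `orders-1`, `orders-2`, `orders-3`, and then wrap around, while unauthenticated calls never reach any pool.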

When deciding which option is best suited for your application, you'll need to consider the following factors:

- Functionality - Does your application need authentication or rate limiting? If so, an API gateway may be the better choice.

- Performance - How much traffic will your application need to handle? A load balancer may be the better option if you need to distribute traffic across multiple servers.

- Cost - Pricing varies by provider and deployment, and an API gateway's extra features can make it more expensive than a basic load balancer, so budget is also a factor to consider.

Summary of API Gateway and Load Balancer

An API gateway vs. load balancer comparison boils down to this: both manage traffic entering your website or application, but they play different roles. An API gateway handles authentication and security policies, while a load balancer distributes network traffic across multiple servers. When choosing between them, consider which one best meets your specific security and performance needs.

With this information in mind, you can make a more informed decision when selecting an API gateway or load balancer for your application.
